AlphaLab: Autonomous Multi-Agent Research Across Optimization Domains with Frontier LLMs

arXiv cs.AI / 4/13/2026

💬 OpinionDeveloper Stack & InfrastructureIdeas & Deep AnalysisModels & Research

Key Points

- AlphaLab is an autonomous multi-agent research system that, from only a dataset and a natural-language goal, automates the full experimental cycle with no human intervention using frontier LLM agent capabilities.

- The pipeline proceeds through three phases: domain exploration and code/report generation, self-adversarial construction and validation of its evaluation framework, and large-scale GPU experimentation via a Strategist/Worker loop.

- Domain-specific behaviors are implemented through model-generated adapters, allowing the same end-to-end system to work across qualitatively different optimization and research domains without manual pipeline changes.

- In evaluations using GPT-5.2 and Claude Opus 4.6 across CUDA kernel optimization, LLM pretraining, and traffic forecasting, AlphaLab reports substantial gains (e.g., 4.4× faster GPU kernels, 22% lower validation loss, and 23–25% better forecasting), with each model finding different solutions.

- The authors release the code and emphasize that multi-model campaigns improve search coverage, while maintaining a persistent “playbook” that acts as online prompt optimization from accumulated knowledge.

Related Articles

I built the missing piece of the MCP ecosystem

Dev.to

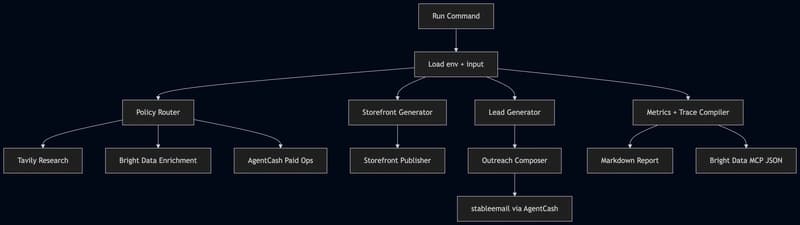

Building an Agentic Commerce Router with TypeScript, AgentCash, Bright Data, Tavily, OpenAI, and Featherless

Dev.to

When Agents Go Wrong: AI Accountability and the Payment Audit Trail

Dev.to

Google Gemma 4 Review 2026: The Open Model That Runs Locally and Beats Closed APIs

Dev.to

OpenClaw Deep Dive Guide: Self-Host Your Own AI Agent on Any VPS (2026)

Dev.to