Demystifying the unreasonable effectiveness of online alignment methods

arXiv cs.LG / 4/21/2026


Key Points

- The paper examines why iterative, greedy online alignment methods (e.g., online RLHF and online DPO) perform much better in practice than existing theory based on KL-regularized regret suggests.

- It argues that the mismatch comes from the regret metric itself: KL-regularized regret conflates the statistical cost of learning with the exploration randomness introduced by the softened (KL-regularized) training policy.

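- To disentangle these effects, the authors adopt a decision-centric “temperature-zero” regret criterion, which scores a policy only by its top-ranked response, i.e., the response it would return under greedy decoding at inference.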

- Under this decision-focused criterion, they prove that standard greedy online alignment methods achieve constant (O(1)) cumulative regret.

- The results offer a clearer theoretical explanation for the empirical effectiveness of greedy alignment approaches by separating best-response identification from regularization-induced stochasticity.
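The key points do not spell out either regret criterion. The following is a minimal toy sketch, not the paper's construction or proof, that makes the distinction concrete. It assumes a single prompt, a finite set of candidate responses, a known true reward, a uniform reference policy, and the standard closed form of the KL-regularized optimal policy, π(y) ∝ π_ref(y)·exp(r(y)/β). All function names, reward values, and the choice β = 0.5 are hypothetical and chosen for illustration only.

```python
import math

# Hypothetical toy setting: one prompt, a finite set of candidate responses,
# a fixed reference policy pi_ref, and a scalar reward per response.

def regularized_policy(ref_probs, rewards, beta):
    """Maximizer of E_pi[r] - beta * KL(pi || pi_ref): pi(y) ∝ pi_ref(y) * exp(r(y) / beta)."""
    weights = [p * math.exp(r / beta) for p, r in zip(ref_probs, rewards)]
    total = sum(weights)
    return [w / total for w in weights]

def kl_divergence(p, q):
    """KL(p || q) for discrete distributions; terms with p_i = 0 contribute 0."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def regularized_value(policy, ref_probs, rewards, beta):
    """KL-regularized objective J_beta(pi) = E_pi[r] - beta * KL(pi || pi_ref)."""
    expected_reward = sum(p * r for p, r in zip(policy, rewards))
    return expected_reward - beta * kl_divergence(policy, ref_probs)

def kl_regularized_regret(policy, ref_probs, rewards, beta):
    """J_beta(pi*) - J_beta(policy), where pi* is built from the true rewards."""
    best = regularized_policy(ref_probs, rewards, beta)
    return (regularized_value(best, ref_probs, rewards, beta)
            - regularized_value(policy, ref_probs, rewards, beta))

def temperature_zero_regret(policy, rewards):
    """Reward gap between the best response and the policy's top-ranked (argmax) response."""
    top = max(range(len(policy)), key=lambda i: policy[i])
    return max(rewards) - rewards[top]

def sampling_gap(policy, rewards):
    """Reward lost by sampling from the softened policy instead of acting greedily."""
    return max(rewards) - sum(p * r for p, r in zip(policy, rewards))

true_rewards = [1.0, 0.8, 0.2]
estimated_rewards = [0.9, 0.85, 0.3]  # hypothetical noisy estimate; ranking still correct
ref_probs = [1 / 3] * 3               # assumed uniform reference policy
beta = 0.5                            # assumed regularization strength

# The learner acts with the regularized policy built from its estimated rewards.
learned = regularized_policy(ref_probs, estimated_rewards, beta)
report = {
    "kl_regularized_regret": kl_regularized_regret(learned, ref_probs, true_rewards, beta),
    "temperature_zero_regret": temperature_zero_regret(learned, true_rewards),
    "sampling_gap": sampling_gap(learned, true_rewards),
}
print(report)
```

In this toy case the learned policy still ranks the best response first, so its temperature-zero regret is exactly zero. Its KL-regularized regret stays positive because the soft policy differs from π*, and sampling from it forfeits some reward even when the top-ranked decision is already correct. This mirrors, under the stated assumptions only, the separation between best-response identification and regularization-induced stochasticity that the paper describes.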

Related Articles

The 2026 Forbes AI 50 List

Reddit r/artificial

Add cryptographic authorization to AI agents in 5 minutes

Dev.to

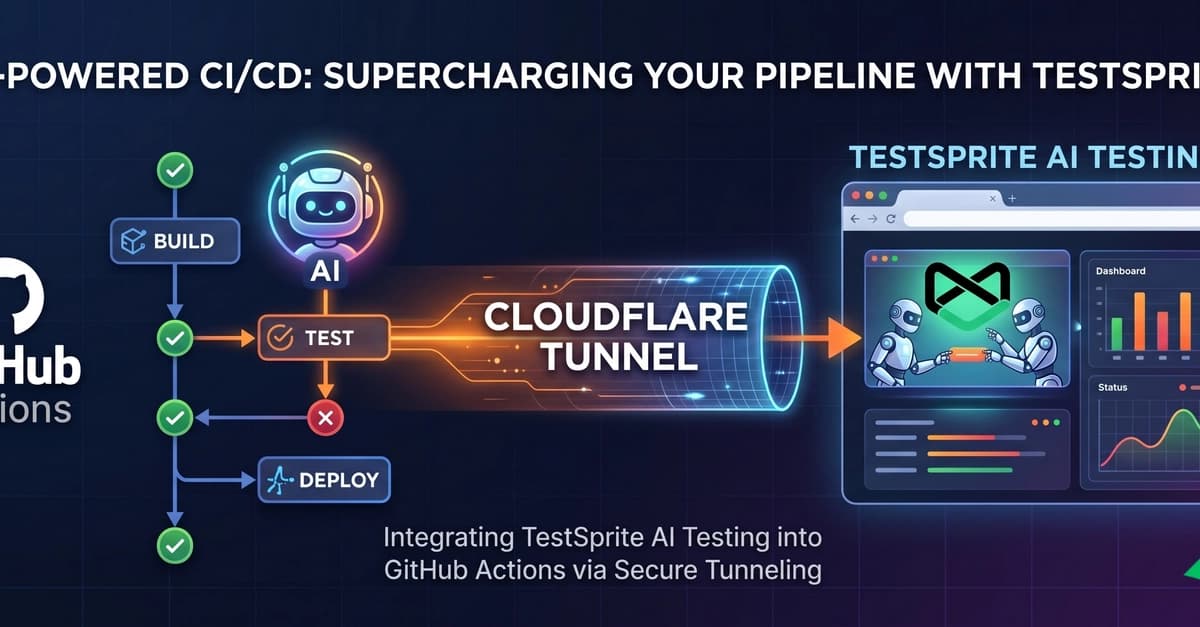

Supercharging Your CI/CD: Integrating TestSprite AI Testing with GitHub Actions

Dev.to

Claude and I aren't vibing at all

Dev.to

The ULTIMATE Guide to AI Voice Cloning: RVC WebUI (Zero to Hero)

Dev.to