Bayesian Hierarchical Invariant Prediction

arXiv stat.ML / 4/7/2026


Key Points

- The paper introduces Bayesian Hierarchical Invariant Prediction (BHIP), which reframes Invariant Causal Prediction (ICP) as a hierarchical Bayesian model.

- BHIP tests whether causal mechanisms remain invariant across heterogeneous data, and it uses the hierarchical structure explicitly so that computation scales better as the number of predictors grows.

- Because BHIP is Bayesian, it can incorporate prior information, something ICP-style methods may not directly support in the same way.

- The authors evaluate BHIP on synthetic and real-world datasets and find evidence that it can serve as an alternative inference approach to ICP and related methods.

- Overall, the work aims to make invariant causal inference more scalable and more flexible by combining invariance testing with Bayesian modeling.
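
To make the invariance idea in the points above concrete, here is a minimal sketch in Python. It is not the BHIP model from the paper and not the ICP test itself: it is a crude, non-Bayesian stand-in that fits an ordinary least-squares slope separately in each environment and reports how far the slopes drift apart. The data-generating process, the function names (`simulate`, `env_slopes`, `slope_spread`), and all parameter values are assumptions chosen for illustration. In the simulated data, `x1` is a causal parent of `y`, while `x2` is a child of `y` whose noise level changes between environments.

```python
import numpy as np


def simulate(n, x1_shift, x2_noise, rng):
    """Hypothetical environment: x1 -> y -> x2 (illustrative only)."""
    x1 = rng.normal(x1_shift, 1.0, n)        # parent of y; its mean shifts by environment
    y = 2.0 * x1 + rng.normal(0.0, 1.0, n)   # mechanism y | x1 is the same everywhere
    x2 = y + rng.normal(0.0, x2_noise, n)    # child of y; its noise differs by environment
    return np.column_stack([x1, x2]), y


def env_slopes(envs, cols):
    """Fit OLS of y on the chosen predictor columns separately in each environment."""
    slopes = []
    for X, y in envs:
        A = np.column_stack([np.ones(len(y)), X[:, cols]])  # add an intercept column
        coef, *_ = np.linalg.lstsq(A, y, rcond=None)
        slopes.append(coef[1:])                              # drop the intercept
    return np.array(slopes)


def slope_spread(envs, cols):
    """Largest gap between environments in any fitted slope (crude invariance check)."""
    s = env_slopes(envs, cols)
    return float(np.max(s.max(axis=0) - s.min(axis=0)))


rng = np.random.default_rng(0)
envs = [
    simulate(5000, x1_shift=0.0, x2_noise=0.5, rng=rng),
    simulate(5000, x1_shift=1.0, x2_noise=2.0, rng=rng),
]

spread_parent = slope_spread(envs, [0])  # regress y on x1 only
spread_child = slope_spread(envs, [1])   # regress y on x2 only

print(f"slope spread using x1 (parent): {spread_parent:.3f}")
print(f"slope spread using x2 (child):  {spread_child:.3f}")
```

Under these assumptions, the slope on `x1` should stay close to 2 in both environments, while the slope on `x2` should change noticeably, which is the kind of signal an invariance test looks for. A hierarchical Bayesian treatment would, roughly speaking, replace the per-environment fits with environment-level coefficients that share a common prior and then reason about how much they vary; the details of how BHIP does this are in the paper and are not reproduced here.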

Related Articles

Research with ChatGPT

Dev.to

Silicon Valley is quietly running on Chinese open source models and almost nobody is talking about it

Reddit r/LocalLLaMA

Why AI Product Quality Is Now an Evaluation Pipeline Problem, Not a Model Problem

Dev.to

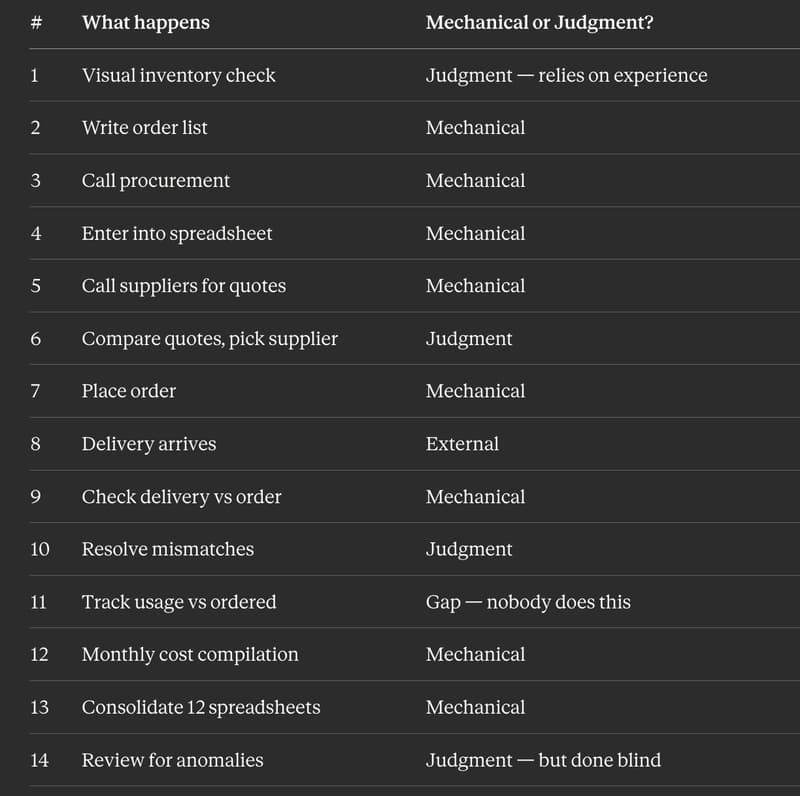

I Replaced 12 Kitchen Managers Guessing "How Much Chicken Do We Need" With 3 ML Models. Here's the Entire Architecture.

Dev.to

AI Model Router API - REST + MCP, Free Tier

Dev.to