Attention Sinks in Massively Multilingual Neural Machine Translation:Discovery, Analysis, and Mitigation

arXiv cs.LG / 5/5/2026

📰 NewsIdeas & Deep AnalysisModels & Research

Key Points

- The paper identifies a systematic artifact in cross-attention analysis for the NLLB-200 multilingual NMT model: “attention sinks” where non-content tokens (EOS tokens, language tags, and punctuation) absorb 83%–91% of total cross-attention mass.

- Because these sinks skew attention distributions, raw cross-attention metrics can severely underestimate content-level similarity by nearly half (36.7% raw vs. 70.7% after filtering), making many uncorrected interpretability studies unreliable.

- The authors trace the effect to a vocabulary-design causal mechanism rather than position bias, extending prior LLM attention-sink findings to NMT.

- They validate a content-only filtering and renormalization method, showing the artifact is universal across African and non-African language benchmarks and that corrected analyses recover meaningful signals (mode gaps, language-family clustering, and a “Somali paradox”).

- The study releases a filtering toolkit and corrected datasets to enable reproducible, more trustworthy interpretability research for multilingual NMT.

Related Articles

The 55.6% problem: why frontier LLMs fail at embedded code

Dev.to

Four CVEs in a week, all the same shape: when agents execute LLM-generated code

Dev.to

Healthcare AI Is Absorbing Institutional Knowledge It Can't Actually Hold

Reddit r/artificial

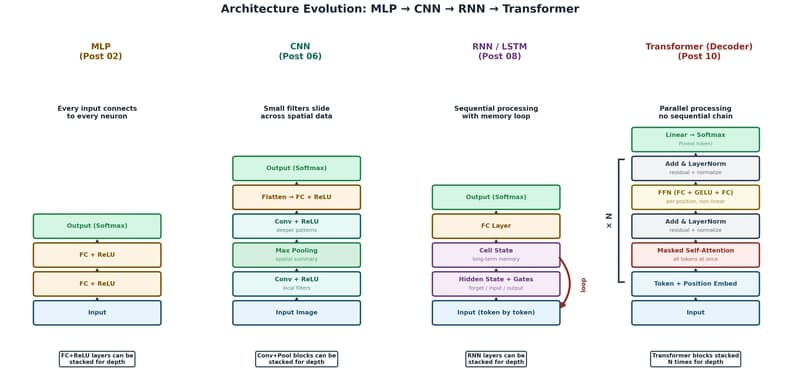

The Transformer: The Architecture Behind Modern AI

Dev.to

Foundational Models Defining a New Era in Vision: A Survey and Outlook

Dev.to