When Spike Sparsity Does Not Translate to Deployed Cost: VS-WNO on Jetson Orin Nano

arXiv cs.LG / 4/21/2026


Key Points

- The paper evaluates whether spike sparsity in spiking neural operator models actually lowers latency and energy when deployed on a Jetson Orin Nano using standard edge-GPU software stacks.

- In the “reference-aligned” path, VS-WNO shows clear algorithmic sparsity, with mean spike rates dropping from 54.26% in the first spiking layer to 18.15% in the fourth.

- In a more “deployment-style” request path, however, that sparsity does not translate into savings: VS-WNO takes 59.6 ms and consumes 228.0 mJ of dynamic energy per inference, versus 53.2 ms and 180.7 mJ for the dense WNO baseline, making it roughly 12% slower and 26% more energy-hungry.

- Profiling with Nsight Systems suggests the runtime remains launch-dominated (kernel-launch overhead, not arithmetic, dominates execution time) and that dense computation is not suppressed as spike activity falls, which explains why cost does not improve.

- The authors conclude that spike sparsity is observable but insufficient for reducing deployed cost on this Jetson-class GPU stack because sparse execution is not realized by the software runtime.
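The headline comparison can be made concrete with a little arithmetic on the figures quoted above. The short Python sketch below only recomputes ratios from those reported numbers; the helper names are our own, and the average-power figure assumes the dynamic energy is accumulated over exactly the measured latency window, which the summary does not state. It gives a 66.5% relative drop in mean spike rate from the first to the fourth spiking layer, and a VS-WNO overhead of about +12.0% latency and +26.2% dynamic energy relative to dense WNO. Under the stated assumption, VS-WNO also draws a higher average dynamic power (about 3.83 W versus 3.40 W).

```python
"""Derived ratios from the figures quoted in the Key Points (arithmetic only)."""

# Values as reported in the summary.
SPIKE_RATE_LAYER1 = 54.26  # % mean spike rate, first spiking layer
SPIKE_RATE_LAYER4 = 18.15  # % mean spike rate, fourth spiking layer
VSWNO_LATENCY_MS, VSWNO_ENERGY_MJ = 59.6, 228.0
WNO_LATENCY_MS, WNO_ENERGY_MJ = 53.2, 180.7


def relative_change(new: float, baseline: float) -> float:
    """Fractional change of `new` relative to `baseline` (0.12 means +12%)."""
    return new / baseline - 1.0


def mean_power_w(energy_mj: float, latency_ms: float) -> float:
    """Average power in watts (mJ / ms = W).

    Assumption: the energy is spread over exactly the latency window.
    """
    return energy_mj / latency_ms


if __name__ == "__main__":
    drop = -relative_change(SPIKE_RATE_LAYER4, SPIKE_RATE_LAYER1)
    print(f"spike-rate reduction, layer 1 -> layer 4: {drop:.1%}")
    print(f"VS-WNO latency vs WNO: {relative_change(VSWNO_LATENCY_MS, WNO_LATENCY_MS):+.1%}")
    print(f"VS-WNO energy vs WNO:  {relative_change(VSWNO_ENERGY_MJ, WNO_ENERGY_MJ):+.1%}")
    print(
        f"implied mean dynamic power: VS-WNO {mean_power_w(VSWNO_ENERGY_MJ, VSWNO_LATENCY_MS):.2f} W, "
        f"WNO {mean_power_w(WNO_ENERGY_MJ, WNO_LATENCY_MS):.2f} W"
    )
```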
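Why lower spike activity need not lower latency can be illustrated with a deliberately simple, hypothetical cost model: a fixed per-kernel launch overhead plus a compute term. In a dense runtime the compute term ignores spike activity; in an idealized sparse runtime it scales with the spike rate, but the launch overhead is untouched. The class `ToyCostModel` and every parameter value below are illustrative assumptions, not measurements from the paper and not a model of VS-WNO's actual kernels.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToyCostModel:
    """Hypothetical per-inference latency model; parameters are illustrative only."""

    n_kernel_launches: int     # kernels issued per inference (assumed)
    launch_overhead_ms: float  # fixed cost per launch, independent of data (assumed)
    dense_compute_ms: float    # compute time when every element is processed (assumed)

    def _launch_ms(self) -> float:
        # Total launch overhead: paid on every inference, whatever the activity.
        return self.n_kernel_launches * self.launch_overhead_ms

    def dense_runtime_ms(self, spike_rate: float) -> float:
        # Dense execution processes every element, so spike_rate is deliberately ignored.
        return self._launch_ms() + self.dense_compute_ms

    def ideal_sparse_runtime_ms(self, spike_rate: float) -> float:
        # Idealized sparse execution: compute shrinks with activity, launches do not.
        if not 0.0 <= spike_rate <= 1.0:
            raise ValueError("spike_rate must lie in [0, 1]")
        return self._launch_ms() + spike_rate * self.dense_compute_ms


if __name__ == "__main__":
    model = ToyCostModel(n_kernel_launches=400, launch_overhead_ms=0.1, dense_compute_ms=10.0)
    for rate in (1.0, 0.5, 0.2):
        print(
            f"spike rate {rate:.0%}: dense {model.dense_runtime_ms(rate):.1f} ms, "
            f"ideal sparse {model.ideal_sparse_runtime_ms(rate):.1f} ms"
        )
```

With these made-up numbers (400 launches at 0.1 ms each, 10 ms of dense compute), the dense path stays at 50.0 ms regardless of activity, while even the ideal sparse path only falls to 42.0 ms at 20% activity, because 40 ms of launch overhead remains. In this toy setting, a launch-dominated runtime caps the benefit of sparsity before any sparse kernel is involved.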

Related Articles

The 2026 Forbes AI 50 List

Reddit r/artificial

Add cryptographic authorization to AI agents in 5 minutes

Dev.to

Building a website with Replit and Vercel

Dev.to

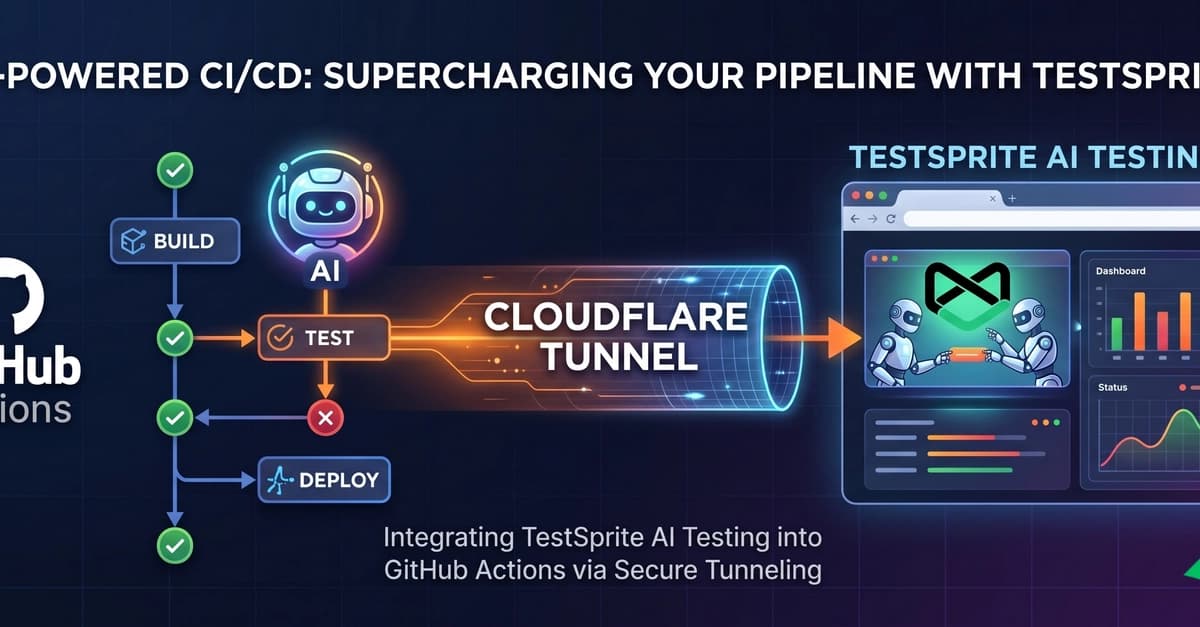

Supercharging Your CI/CD: Integrating TestSprite AI Testing with GitHub Actions

Dev.to

The ULTIMATE Guide to AI Voice Cloning: RVC WebUI (Zero to Hero)

Dev.to