Multi-Faceted Self-Consistent Preference Alignment for Query Rewriting in Conversational Search

arXiv cs.CL / 4/9/2026


Key Points

- The paper addresses Conversational Query Rewriting (CQR), arguing that optimizing rewrites in isolation is insufficient: a rewrite should also be judged by its downstream effect on passage retrieval and response generation.

- It introduces MSPA-CQR, which builds self-consistent preference-alignment data along three dimensions (rewriting, passage retrieval, and response) to produce more diverse rewritten queries.

- The method uses “prefix guided multi-faceted direct preference optimization” to learn and reconcile preferences from the three dimensions during training.

- Experiments reported in the abstract indicate the approach improves CQR performance in both in-distribution and out-of-distribution settings, suggesting better robustness.
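
As a rough illustration of the preference-optimization idea in the key points, here is a minimal sketch of the standard direct preference optimization (DPO) loss for a single chosen/rejected pair, followed by one hypothetical way to average it over the three facets. The abstract does not say how MSPA-CQR's prefix guidance or its reconciliation of facets works, so `FACETS`, `multi_facet_loss`, the equal weighting across facets, and the `beta` value are all assumptions for illustration, not the paper's method.

```python
import math

def dpo_loss(policy_chosen, policy_rejected, ref_chosen, ref_rejected, beta=0.1):
    """Standard DPO loss for one preference pair.

    Inputs are sequence log-probabilities of the chosen and rejected outputs
    under the trained policy and under a frozen reference model.
    """
    margin = beta * ((policy_chosen - ref_chosen) - (policy_rejected - ref_rejected))
    # -log(sigmoid(margin)), written in a numerically stable form.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))

# Hypothetical facet labels mirroring the three dimensions named in the summary.
FACETS = ("rewrite", "retrieval", "response")

def multi_facet_loss(pairs, beta=0.1):
    """Assumed aggregation: mean DPO loss per facet, then an equal-weight mean.

    Each pair is a dict with a "facet" key and the four log-probabilities.
    """
    per_facet = {facet: [] for facet in FACETS}
    for pair in pairs:
        per_facet[pair["facet"]].append(
            dpo_loss(pair["policy_chosen"], pair["policy_rejected"],
                     pair["ref_chosen"], pair["ref_rejected"], beta)
        )
    facet_means = [sum(v) / len(v) for v in per_facet.values() if v]
    return sum(facet_means) / len(facet_means)

# Usage: one illustrative pair per facet, with made-up log-probabilities.
example_pairs = [
    {"facet": "rewrite", "policy_chosen": -4.0, "policy_rejected": -6.0,
     "ref_chosen": -5.0, "ref_rejected": -5.0},
    {"facet": "retrieval", "policy_chosen": -3.0, "policy_rejected": -3.5,
     "ref_chosen": -3.2, "ref_rejected": -3.2},
    {"facet": "response", "policy_chosen": -7.0, "policy_rejected": -7.0,
     "ref_chosen": -7.0, "ref_rejected": -7.0},
]
print(multi_facet_loss(example_pairs))
```

When the policy and the reference agree exactly, the margin is zero and the per-pair loss is log 2; the loss falls below that as the policy favors the chosen output more strongly than the reference does.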

Related Articles

Research with ChatGPT

Dev.to

Silicon Valley is quietly running on Chinese open source models and almost nobody is talking about it

Reddit r/LocalLLaMA

Why AI Product Quality Is Now an Evaluation Pipeline Problem, Not a Model Problem

Dev.to

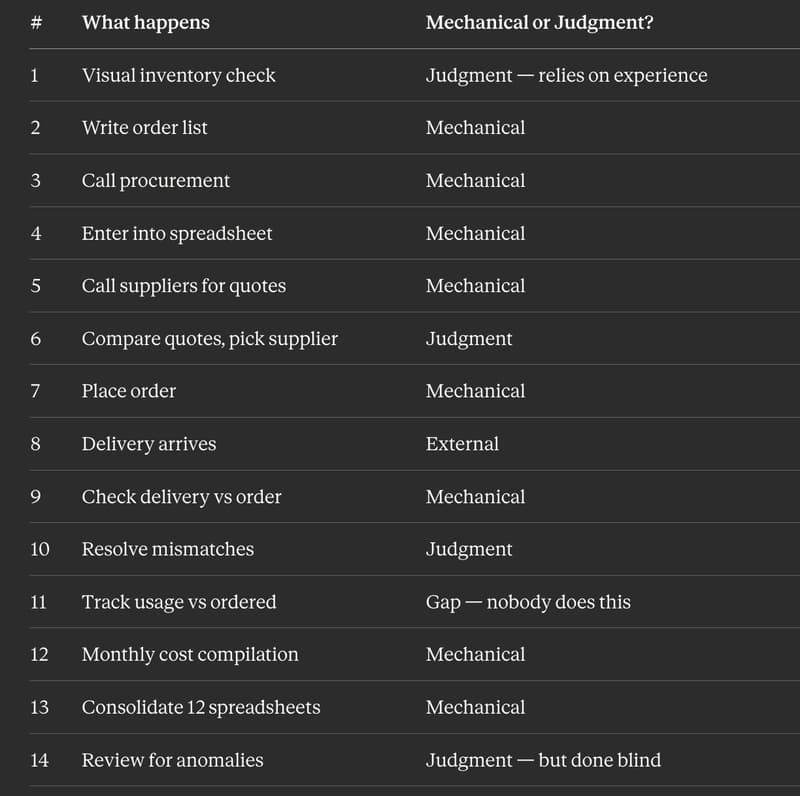

I Replaced 12 Kitchen Managers Guessing "How Much Chicken Do We Need" With 3 ML Models. Here's the Entire Architecture.

Dev.to

AI Model Router API - REST + MCP, Free Tier

Dev.to