Submitted by /u/Ok-Type-7663

New 31M and 14M Pythia models???!!!

Reddit r/LocalLLaMA / 4/26/2026

💬 Opinion · Signals & Early Trends · Models & Research

Key Points

- The source is a Reddit post asking whether new Pythia models have been released since February.

- It specifically mentions two model sizes—31M and 14M—suggesting potential updates or variants within the Pythia family.

- The post notes that Pythia is relatively old, so new releases would be notable for users following small-scale language models.

- The information appears to come from a shared link rather than an official announcement, implying it may be a rumor or early signal pending confirmation.

- The key takeaway is heightened community attention to possible new lightweight Pythia checkpoints that could be useful for local or resource-constrained experimentation.

Related Articles

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

How I tracked which AI bots actually crawl my site

Dev.to

Anthropic created a test marketplace for agent-on-agent commerce

TechCrunch

If I work on something in codex, and future models are trained on my interactions, does that mean the next model release will be able to code my project for other users?

Reddit r/artificial

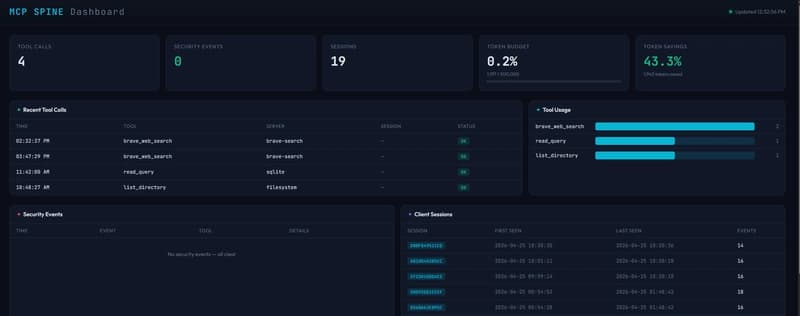

MCP Spine v0.2.5: I Built a Full Middleware Stack for MCP Tool Calls

Dev.to