Hi everyone, I’m the maintainer of Box, a fork of Google’s AI Edge Gallery that I’ve been extending into a fully offline AI assistant for Android. Full disclosure: I built this project. It runs entirely on-device (no cloud, no accounts, no external inference) and combines multiple local inference backends in a single app.

What I’ve been experimenting with

The goal was to see how far a fully offline mobile AI stack could be pushed using:

All running on Android with hardware acceleration where available (GPU / NPU / TPU).

Current capabilities

Architecture focus

What I’ve found interesting while building this:

Repo (for reference)

Why I’m posting this here

I’m mainly sharing this for feedback from people also working on local inference systems, especially around:

I’m not trying to push adoption; I’m more interested in technical critique than anything else. Happy to answer questions or go deeper into any part of the stack if useful.

Hybrid on-device inference on Android: llama.cpp + LiteRT + NPU/GPU routing

Reddit r/LocalLLaMA / 5/2/2026

💬 Opinion / Developer Stack & Infrastructure / Tools & Practical Usage / Models & Research

Key Points

- The maintainer describes “Box,” a fork of Google’s AI Edge Gallery, built to run a fully offline AI assistant on Android with no cloud inference or accounts.

- The project experiments with a hybrid on-device stack combining llama.cpp (GGUF LLM), whisper.cpp (offline STT), stable-diffusion.cpp (image generation), and LiteRT for execution.

- It enables multimodal capabilities including streaming voice-to-voice conversation and live camera frame + natural-language Q&A, while also supporting local document context ingestion and custom GGUF model import.

- A key architectural takeaway is that hybrid LiteRT + llama.cpp inference performs better than expected on newer Snapdragon/Pixel NPUs, and that model routing (CPU/GPU/NPU/TPU) often matters more than raw model size.

- The author notes that for many mobile scenarios, memory usage and persistence become the main bottlenecks before compute, and they’re seeking technical feedback on quantization, runtime routing, multimodal pipelines, and performance tuning.
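
The last two points, routing across backends and memory becoming the limit before compute, can be illustrated with a rough sketch. This is not Box's code: the `ModelSpec` fields, the backend names, the preference order, and the per-backend byte budgets are all hypothetical, and the estimate ignores activations, runtime overhead, and any backend-specific operator support. It only shows the arithmetic (quantized weights plus KV cache) and a simple "first preferred backend that fits" policy.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical preference order (fastest first); not taken from Box's source.
PREFERENCE = ["npu", "gpu", "cpu"]


@dataclass
class ModelSpec:
    n_params: float         # total parameter count
    bits_per_weight: float  # average bits per weight after quantization
    n_layers: int           # number of transformer layers
    n_kv_heads: int         # number of key/value heads per layer
    head_dim: int           # dimension of each head


def weight_bytes(m: ModelSpec) -> float:
    """Approximate size of the quantized weights in bytes."""
    return m.n_params * m.bits_per_weight / 8


def kv_cache_bytes(m: ModelSpec, context_len: int, bytes_per_elem: int = 2) -> int:
    """KV cache size: one key and one value vector per layer, KV head, and token."""
    return 2 * m.n_layers * m.n_kv_heads * m.head_dim * context_len * bytes_per_elem


def pick_backend(m: ModelSpec, context_len: int, budgets: dict,
                 preference=PREFERENCE) -> Optional[str]:
    """Return the first preferred backend whose byte budget fits the model.

    `budgets` maps a backend name to the bytes it may use; a backend that is
    missing from the dict is treated as unavailable. Returns None if nothing fits.
    """
    needed = weight_bytes(m) + kv_cache_bytes(m, context_len)
    for backend in preference:
        if budgets.get(backend, 0) >= needed:
            return backend
    return None


# Illustrative numbers only: a 1B-parameter model at 4 bits per weight.
spec = ModelSpec(n_params=1e9, bits_per_weight=4, n_layers=16,
                 n_kv_heads=8, head_dim=64)
budgets = {"npu": 400e6, "gpu": 1e9, "cpu": 2e9}  # assumed budgets in bytes
print(pick_backend(spec, 2048, budgets))  # prints "gpu"
```

With these assumed numbers the weights take 500 MB and a 2048-token fp16 KV cache adds about 67 MB, so the model overflows the 400 MB NPU budget and falls through to the GPU. Lengthening the context or raising the bits per weight changes the routing decision without changing the model, which is one way to read the claim that routing and memory matter more than raw model size.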

Related Articles

Black Hat USA

AI Business

Every handle invocation on BizNode gets a WFID — a universal transaction reference for accountability. Full audit trail,...

Dev.to

I tracked my referral sources for 30 days. AI chatbots are beating Google.

Dev.to

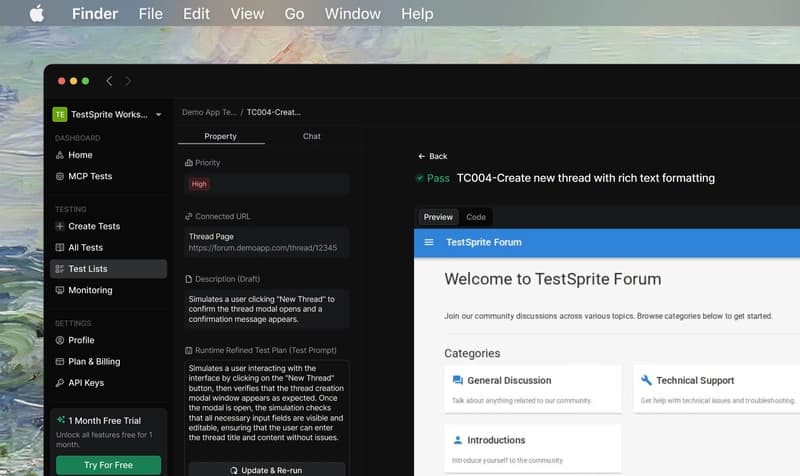

TestSprite: Review Mendalam dari Developer Indonesia — Lokalisasi, Tanggal, dan Mata Uang Rupiah

Dev.to

When AI Agents Trade Autonomously: Building Economic Actors That Never Sleep

Dev.to