COEVO: Co-Evolutionary Framework for Joint Functional Correctness and PPA Optimization in LLM-Based RTL Generation

arXiv cs.AI / 4/17/2026


Key Points

- The paper argues that current LLM-based RTL generation methods typically treat functional correctness and PPA (power, performance, area) optimization as separate stages, so promising but only partially correct designs are discarded before their PPA potential is considered.

- It introduces COEVO, a co-evolutionary framework that optimizes correctness and PPA together in a single evolutionary loop using a continuously scored correctness dimension.

- COEVO improves guidance for the search by using an enhanced testbench for fine-grained scoring and detailed diagnostics, plus an adaptive correctness gate with annealing to keep partially correct but PPA-promising candidates in play.

- To better capture PPA trade-offs, COEVO replaces scalar fitness with four-dimensional Pareto-based non-dominated sorting that preserves the full area/delay/power structure without manual weight tuning.

- In evaluations on VerilogEval 2.0 and RTLLM 2.0, COEVO reports 97.5% and 94.5% Pass@1, respectively, using GPT-5.4-mini, and it achieves the best PPA on 43 of 49 synthesizable RTL designs across multiple LLM backbones.
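
The correctness gate and the Pareto-based ranking described in the key points can be illustrated with a small sketch. This is not the paper's implementation: the `Candidate` fields, the linear annealing schedule in `gate_threshold`, its `start` and `end` values, and the choice of correctness as the fourth objective alongside area, delay, and power are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Candidate:
    """Hypothetical record for one RTL design candidate."""
    name: str
    correctness: float  # fraction of testbench checks passed, in [0, 1]; higher is better
    area: float         # lower is better
    delay: float        # lower is better
    power: float        # lower is better


def objectives(c: Candidate) -> Tuple[float, float, float, float]:
    # Express all four dimensions as "lower is better" by negating correctness.
    return (-c.correctness, c.area, c.delay, c.power)


def dominates(a: Candidate, b: Candidate) -> bool:
    # a dominates b if it is no worse on every objective and strictly better on one.
    oa, ob = objectives(a), objectives(b)
    return all(x <= y for x, y in zip(oa, ob)) and any(x < y for x, y in zip(oa, ob))


def gate_threshold(generation: int, total_generations: int,
                   start: float = 0.5, end: float = 1.0) -> float:
    # Assumed linear annealing: the minimum correctness required to stay in the
    # population rises from `start` in the first generation to `end` in the last.
    if total_generations <= 1:
        return end
    frac = min(max(generation / (total_generations - 1), 0.0), 1.0)
    return start * (1.0 - frac) + end * frac


def non_dominated_sort(population: List[Candidate]) -> List[List[Candidate]]:
    # Peel off successive Pareto fronts; front 0 holds the non-dominated candidates.
    fronts: List[List[Candidate]] = []
    remaining = list(population)
    while remaining:
        front = [c for c in remaining
                 if not any(dominates(o, c) for o in remaining)]
        fronts.append(front)
        remaining = [c for c in remaining if c not in front]
    return fronts


def select(population: List[Candidate], generation: int,
           total_generations: int) -> List[List[Candidate]]:
    # Apply the annealed correctness gate, then rank the survivors by Pareto front.
    threshold = gate_threshold(generation, total_generations)
    admitted = [c for c in population if c.correctness >= threshold]
    return non_dominated_sort(admitted)
```

Under these assumptions, calling `select(population, generation, total_generations)` early in a run keeps a candidate that passes only some checks but has better area, delay, and power in the first front next to a fully correct design, while the same call in the final generation admits only fully correct candidates. No manual weights are needed because candidates are compared dimension by dimension rather than through a single scalar score.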

Related Articles

Small NSFW model for chatbot

Reddit r/LocalLLaMA

ChatGPT for Nurses: Prompts That Help You Document, Communicate, and Study

Dev.to

I Added a Stopwatch to My AI in 1 LOC Using the Livingrimoire While Corporations Need a Year

Dev.to

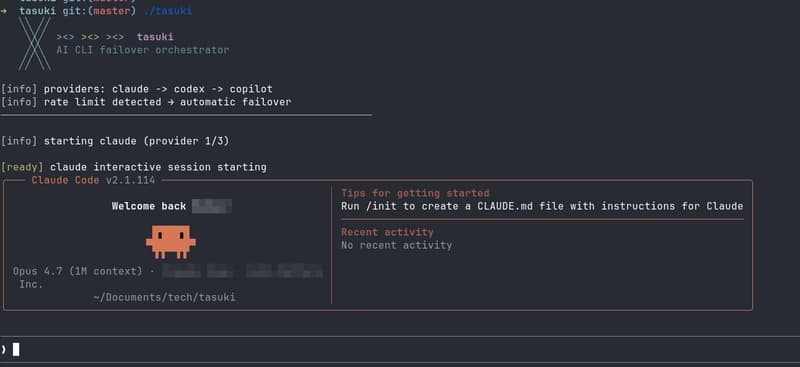

Built tasuki — an AI CLI Orchestrator that Seamlessly Hands Off Between Tools

Dev.to

I built a GNOME extension for Codex with local/remote history, live filters, Markdown export, and a read-only MCP server

Reddit r/artificial