CWoMP: Morpheme Representation Learning for Interlinear Glossing

arXiv cs.CL / 3/20/2026


Key Points

- CWoMP introduces a morpheme-centric pretraining framework that treats morphemes as atomic units and learns representations that align words in context with their constituent morphemes in a shared embedding space.

- The approach uses a contrastively trained encoder and an autoregressive decoder that retrieves morpheme sequences from a mutable lexicon, producing predictions grounded in lexicon entries for interpretability.

- A key novelty is that users can expand the lexicon at inference time to improve results without retraining, enabling interactive, incremental improvements.

- Evaluations on a diverse set of extremely low-resource languages show that CWoMP outperforms existing methods while being more efficient, with notable gains when training data is scarce.
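
The lexicon-grounded retrieval and inference-time expansion described above can be illustrated with a toy sketch. This is not the paper's implementation: the `Lexicon` class, its `add` and `retrieve` methods, and the hand-written three-dimensional vectors are all hypothetical stand-ins for a trained encoder's outputs, and the single nearest-neighbor lookup replaces the autoregressive decoder with one retrieval step.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


class Lexicon:
    """Hypothetical mutable lexicon mapping gloss labels to embeddings."""

    def __init__(self):
        self.entries = {}

    def add(self, gloss, vector):
        # Adding an entry changes only the lexicon; no model weights are touched.
        self.entries[gloss] = list(vector)

    def retrieve(self, query):
        # Return the gloss whose embedding is most similar to the query.
        return max(self.entries, key=lambda g: cosine(query, self.entries[g]))


lexicon = Lexicon()
lexicon.add("dog", [1.0, 0.0, 0.0])
lexicon.add("PL", [0.0, 1.0, 0.0])

# Assumed embedding of a word in context (hand-written, not encoder output).
query = [0.1, 0.2, 0.9]

before = lexicon.retrieve(query)      # best match among the two known entries
lexicon.add("run", [0.0, 0.0, 1.0])   # user expands the lexicon at inference time
after = lexicon.retrieve(query)       # the new entry is now the closest match

print(before, after)
```

Because every prediction is an entry in `lexicon.entries`, a wrong output can be traced to a specific entry, and a missing morpheme can be fixed by adding one, which is the kind of interactive workflow the summary describes.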

Related Articles

Interactive Web Visualization of GPT-2

Reddit r/artificial

[R] Causal self-attention as a probabilistic model over embeddings

Reddit r/MachineLearning

The 5 software development trends that actually matter in 2026 (and what they mean for your startup)

Dev.to

InVideo AI Review: Fast Finished

Dev.to

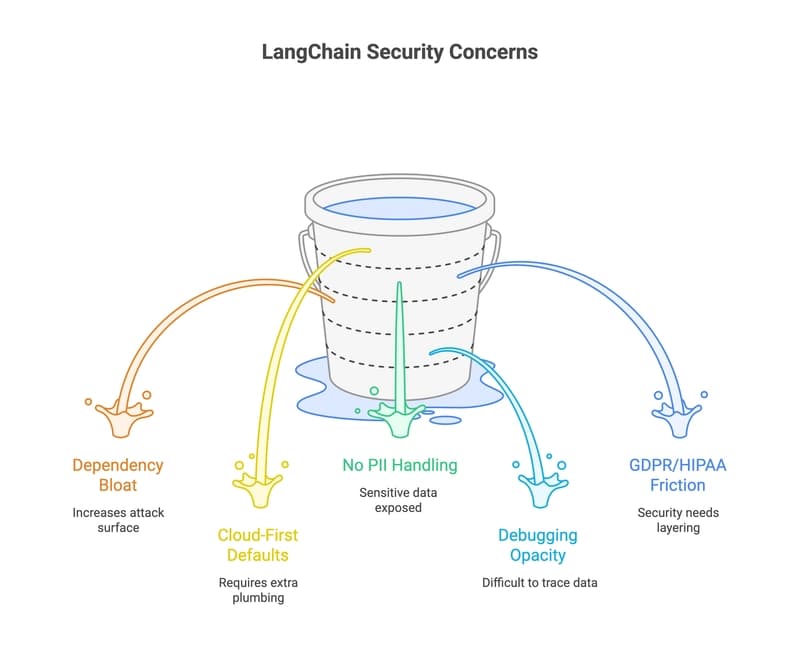

33 LangChain Alternatives That Won't Leak Your Data (2026 Guide)

Dev.to