NASimJax: GPU-Accelerated Policy Learning Framework for Penetration Testing

arXiv cs.LG / 3/23/2026

Key Points

- NASimJax is a GPU-accelerated, JAX-based reimplementation of NASim that achieves up to 100x higher environment throughput, enabling reinforcement learning training on larger network scenarios.

- The work formulates automated penetration testing as a Contextual POMDP and introduces a network generation pipeline that yields structurally diverse and guaranteed-solvable scenarios to study zero-shot generalization.

- It introduces a two-stage action selection mechanism (2SAS) to handle linearly growing action spaces and shows that this approach substantially outperforms flat action masking at scale.

- The paper analyzes interactions between Prioritized Level Replay and 2SAS, identifies a failure mode related to their credit-assignment dynamics, and demonstrates that NASimJax provides a fast, flexible platform for advancing RL-based penetration testing.
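To make the contrast between flat action masking and a two-stage scheme concrete, here is a minimal pure-Python sketch. It is not the paper's implementation: the function names (`flat_select`, `two_stage_select`, `masked_argmax`), the (host, action type) factorization, the greedy argmax in place of sampling, and the toy scores are all assumptions made for illustration. In a learned policy the second-stage scores would presumably be conditioned on the chosen host; the sketch uses one fixed list for brevity.

```python
from typing import List, Optional, Tuple


def masked_argmax(scores: List[float], mask: List[bool]) -> Optional[int]:
    """Return the index of the highest score whose mask entry is True, or None."""
    best = None
    for i, (score, ok) in enumerate(zip(scores, mask)):
        if ok and (best is None or score > scores[best]):
            best = i
    return best


def flat_select(flat_scores: List[float],
                valid: List[List[bool]]) -> Optional[Tuple[int, int]]:
    """Flat masking: a single head scores every (host, action_type) pair."""
    num_types = len(valid[0])
    flat_mask = [ok for row in valid for ok in row]  # row-major flattening
    idx = masked_argmax(flat_scores, flat_mask)
    return None if idx is None else divmod(idx, num_types)


def two_stage_select(host_scores: List[float],
                     type_scores: List[float],
                     valid: List[List[bool]]) -> Optional[Tuple[int, int]]:
    """Two stages: pick a host that has any valid action, then pick an action type."""
    host_mask = [any(row) for row in valid]
    host = masked_argmax(host_scores, host_mask)
    if host is None:
        return None
    action_type = masked_argmax(type_scores, valid[host])
    return host, action_type


# Hypothetical toy scenario: 3 hosts, 3 action types; valid[h][a] marks legal pairs.
valid = [
    [False, True, False],
    [True, True, False],
    [False, False, False],  # host 2 has no legal action
]
host_scores = [0.2, 0.9, 1.5]
type_scores = [0.1, 0.4, 2.0]
flat_scores = [0.0, 0.3, 9.0, 0.7, 0.1, 9.0, 9.0, 9.0, 9.0]

two_stage_choice = two_stage_select(host_scores, type_scores, valid)
flat_choice = flat_select(flat_scores, valid)
print("two-stage:", two_stage_choice)
print("flat:", flat_choice)

# Output-head sizes for N hosts and K action types: N*K (flat) vs N+K (two-stage).
n_hosts, n_types = 100, 12
print("flat head:", n_hosts * n_types, "two-stage heads:", n_hosts + n_types)
```

Under these assumptions, the flat head must score every host and action-type pair, while the two-stage variant only needs one output per host plus one per action type, which is the kind of saving that matters as networks grow.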

Related Articles

Interactive Web Visualization of GPT-2

Reddit r/artificial

[R] Causal self-attention as a probabilistic model over embeddings

Reddit r/MachineLearning

The 5 software development trends that actually matter in 2026 (and what they mean for your startup)

Dev.to

InVideo AI Review: Fast Finished

Dev.to

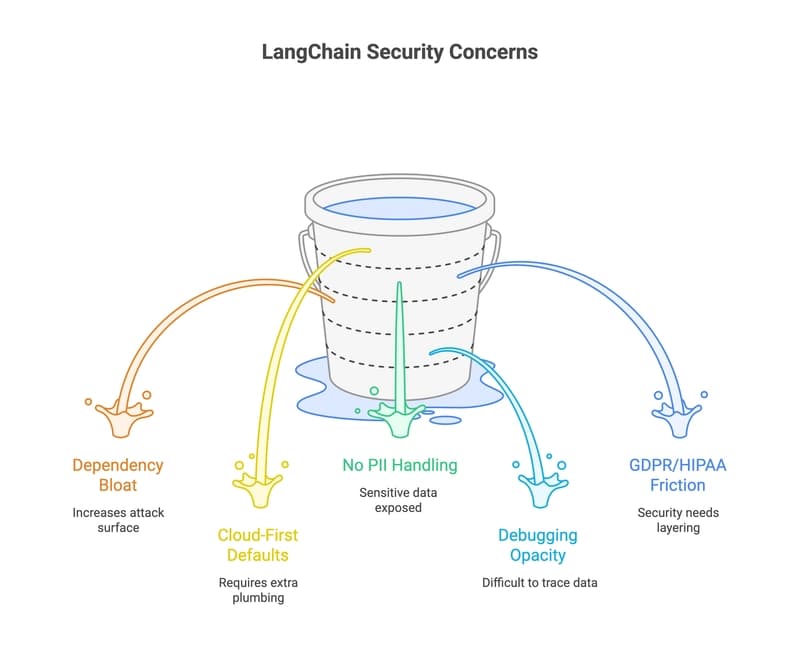

33 LangChain Alternatives That Won't Leak Your Data (2026 Guide)

Dev.to