It is crazy that Qwen3.6 27B now matches Sonnet 4.6 on AA's (Artificial Analysis) Agentic Index, overtaking Gemini 3.1 Pro Preview, GPT 5.2 and 5.3, and MiniMax 2.7. It made gains across all three indices, but given how the Coding Index works, I don't think the gains are as apparent as they should be. The Coding Index only uses Terminal Bench Hard and SciCode, which are both strange choices. Clearly the training on the 3.6 models out now has focused on agentic use for OpenClaw/Hermes, but it's interesting how close to frontier models such a small model can get. Qwen3.6 122B might be epic...

Qwen 3.6 27B Makes Huge Gains in Agency on Artificial Analysis - Ties with Sonnet 4.6

Reddit r/LocalLLaMA / 4/24/2026

💬 Opinion | Signals & Early Trends | Models & Research

Key Points

- Qwen 3.6 27B reportedly matches Sonnet 4.6 on the Agentic Index in Artificial Analysis, surpassing several other strong models including Gemini 3.1 Pro Preview, GPT 5.2/5.3, and MiniMax 2.7.

- The gains reportedly appear across all three Artificial Analysis indices, suggesting a broad improvement rather than a narrow win on a single benchmark.

- The Coding Index's methodology is questioned because it uses only Terminal Bench Hard and SciCode, which the author believes understate the model's real coding improvements.

- The discussion suggests the latest training for Qwen 3.6 models is oriented toward agentic usage (e.g., OpenClaw/Hermes), and it raises the possibility that the larger Qwen 3.6 122B could be especially impactful.

- Overall, the post highlights how a relatively small 27B model can reach close-to-frontier performance on agentic evaluation.

- is_ai_related: true

Related Articles

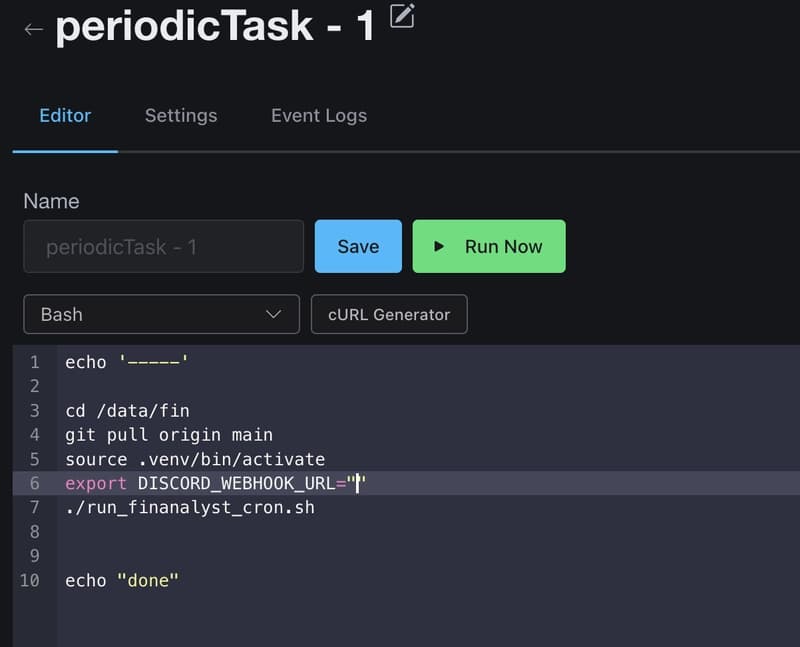

How I Use GitHub Copilot + RapidForge to Generate Daily Stock Ideas

Dev.to

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

Dev.to

Anthropic CVP Run 3 — Does Claude's Safety Stack Scale Down to Haiku 4.5?

Dev.to

Design Patterns for Prompt Engineering: Toward a Formal Discipline

Dev.to

What Generative AI Reveals About the State of Software?

Reddit r/artificial