Characterizing Vision-Language-Action Models across XPUs: Constraints and Acceleration for On-Robot Deployment

arXiv cs.RO / 4/28/2026


Key Points

- The paper analyzes how to deploy Vision-Language-Action (VLA) models on real robots, focusing on real-time inference under strict cost and energy budgets rather than on desktop-GPU performance.

- It introduces model–hardware co-characterization and a cross-accelerator leaderboard (covering GPUs, XPUs, and NPUs) built on a CET (Cost, Energy, Time) metric, and finds that appropriately “right-sized” edge devices can be more efficient than flagship GPUs while still meeting control-rate requirements.

- Profiling reveals a consistent two-phase inference pattern: a compute-bound vision-language model (VLM) backbone followed by a memory-bound Action Expert. This split can cause phase-dependent hardware underutilization and inefficiency.

- The authors propose DP-Cache and V-AEFusion to cut diffusion redundancy and enable asynchronous pipeline parallelism, reporting speedups of up to 2.9x on GPUs and 6x on edge NPUs with only a marginal loss in task success rate.
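
The compute-bound versus memory-bound split described above can be illustrated with the standard roofline model, which compares a workload's arithmetic intensity (FLOPs per byte moved) against a device's ridge point (peak FLOP/s divided by memory bandwidth). The sketch below is not taken from the paper: the `Device` and `Phase` classes, the function names, and every number are hypothetical and chosen only to show how one accelerator can be well used in one phase and poorly used in the next.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    """Hypothetical accelerator description (values are illustrative only)."""
    name: str
    peak_flops: float      # peak compute throughput, FLOP/s
    mem_bandwidth: float   # peak memory bandwidth, bytes/s

    @property
    def ridge_point(self) -> float:
        # Arithmetic intensity (FLOP/byte) at which the roofline bends.
        return self.peak_flops / self.mem_bandwidth


@dataclass(frozen=True)
class Phase:
    """Hypothetical inference phase with its total work and memory traffic."""
    name: str
    flops: float        # floating-point operations in this phase
    bytes_moved: float  # bytes read from and written to memory

    @property
    def intensity(self) -> float:
        return self.flops / self.bytes_moved


def classify(phase: Phase, device: Device) -> str:
    """Label a phase by comparing its intensity with the device ridge point."""
    if phase.intensity >= device.ridge_point:
        return "compute-bound"
    return "memory-bound"


def attainable_flops(phase: Phase, device: Device) -> float:
    """Roofline bound: the lower of the compute roof and the bandwidth roof."""
    return min(device.peak_flops, phase.intensity * device.mem_bandwidth)


def compute_utilization(phase: Phase, device: Device) -> float:
    """Best-case fraction of peak compute this phase can use."""
    return attainable_flops(phase, device) / device.peak_flops


# Assumed numbers, NOT measurements from the paper.
device = Device("hypothetical-xpu", peak_flops=100e12, mem_bandwidth=1e12)
backbone = Phase("VLM backbone", flops=4e12, bytes_moved=8e9)
action_expert = Phase("Action Expert", flops=2e10, bytes_moved=2e9)

for phase in (backbone, action_expert):
    print(phase.name, classify(phase, device),
          f"{compute_utilization(phase, device):.0%} of peak compute")
```

With these assumed values the backbone sits above the ridge point and the Action Expert far below it, so the same device is limited by compute in one phase and by bandwidth in the other. That is the kind of phase-dependent underutilization the summary refers to.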

Related Articles

I Build Systems, Flip Land, and Drop Trap Music — Meet Tyler Moncrieff aka Father Dust

Dev.to

Whatsapp AI booking system in one prompt in 5 minutes

Dev.to

v0.22.1

Ollama Releases

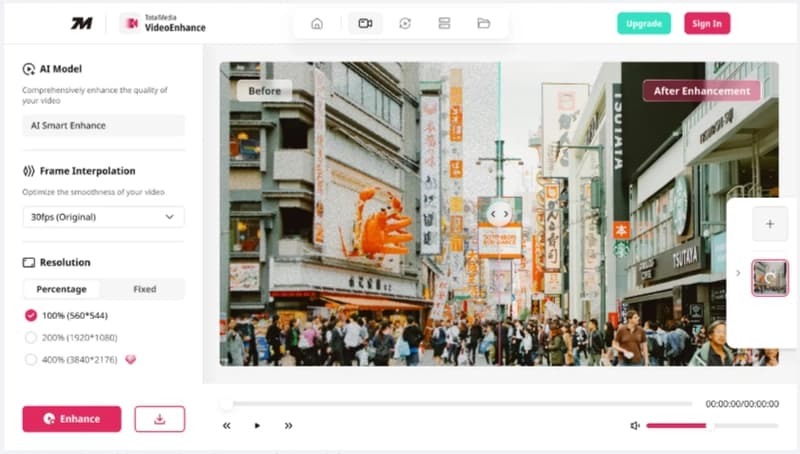

Launching TotalMedia: A Simpler Way to Fix and Convert Video Files

Dev.to

The best of Cloud Next '26: Gemini Enterprise Agent Platform. The perfect combination of Intelligence and Automation to generate VALUE.

Dev.to