Claude Design, Opus 4.7 Regression, GPT-5.3 & KIMI K2 Benchmarks

Today's Highlights

Anthropic unveils Claude Design, an AI-powered web design environment built into Claude. Meanwhile, developers report a 'serious regression' in Claude Opus 4.7, raising concerns about model consistency, and a new political benchmark surfaces refusal and censorship behavior in GPT-5.3 and KIMI K2.

Claude Design just launched and Figma dropped 4.26% in a single day (r/ClaudeAI)

Source: https://reddit.com/r/ClaudeAI/comments/1so6z2t/claude_design_just_launched_and_figma_dropped_426/

Anthropic has launched Claude Design, an AI-powered tool integrated into Claude that generates full websites, landing pages, or user interfaces from a natural-language description of the requirements. The Reddit post pairs the launch with a 4.26% single-day drop for Figma, framing Claude as a direct competitor to traditional design software for rapid prototyping and, potentially, complete web builds.

Claude Design targets developers and non-technical users alike, turning a described idea into working design elements through conversation rather than a dedicated design application. Its launch shows how far commercial AI services now reach into established software markets, particularly creative and development workflows. Developers can use it for quick iterations, testing design concepts, or automating the initial stages of a web project.

Comment: This looks like a game-changer for solo developers or small teams needing rapid UI/UX prototyping without specialized design software. The integration with Claude means conversational prompts could become the new design canvas.

Claude Opus 4.7 is a serious regression, not an upgrade. (r/ClaudeAI)

Source: https://reddit.com/r/ClaudeAI/comments/1snhfzd/claude_opus_47_is_a_serious_regression_not_an/

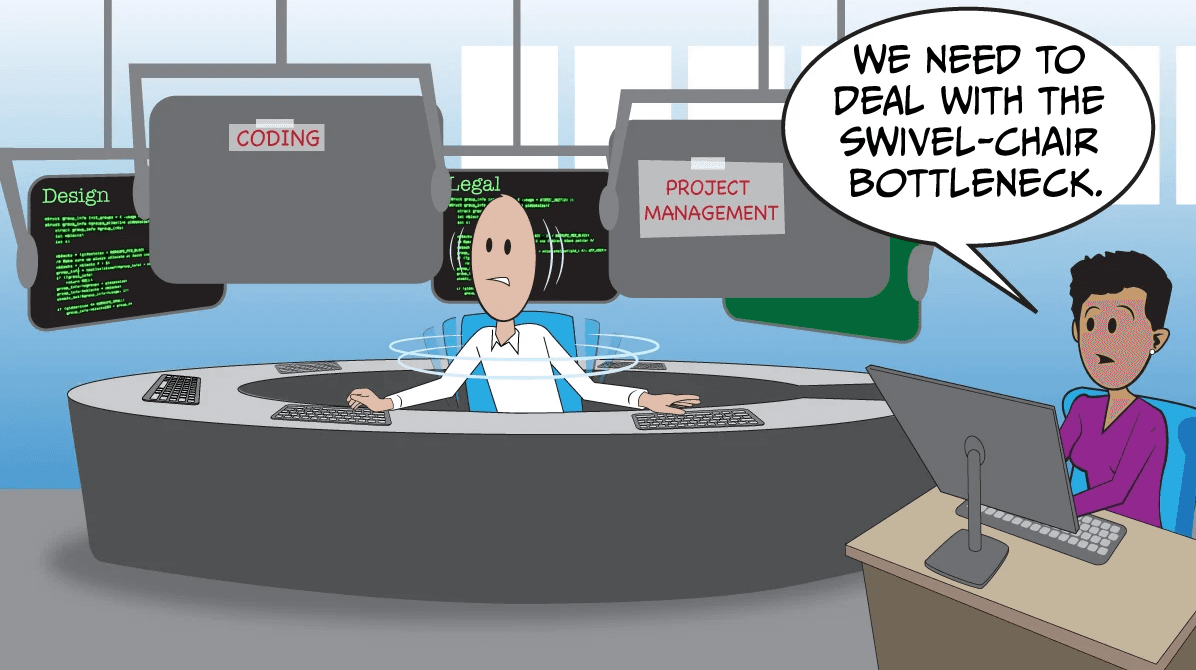

Reports from the ClaudeAI community indicate that Anthropic's latest model, Claude Opus 4.7, is perceived as a significant regression rather than an upgrade. Users describe a notable decline in the model's ability to provide concise, utilitarian output optimized for problem-solving, with an increase in conversational filler and narrative responses.

This feedback matters for developers who rely on consistent, predictable API behavior. The reported regression concerns output style rather than a measured benchmark score, but more verbose, less direct responses still affect tools built on Claude's API: longer outputs consume more tokens and are harder to parse, which forces changes to prompts and integration strategies. Such shifts highlight the continuous adjustment required when working with rapidly evolving commercial AI services.
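One way to catch this kind of style drift before it reaches production is a small regression check that compares a stored baseline response with a candidate response from the new model version. The following is a minimal sketch under stated assumptions: the filler phrases, the `max_word_ratio` threshold, and the function names are all hypothetical, and the responses are assumed to have been collected separately (no API call is shown).

```python
import re

# Hypothetical filler phrases; tune this list for your own application.
FILLER_PATTERNS = [
    r"\bgreat question\b",
    r"\bi'd be happy to\b",
    r"\blet me walk you through\b",
    r"\bi hope this helps\b",
]


def verbosity_metrics(text: str) -> dict:
    """Count whitespace-separated words and filler-phrase matches in a response."""
    lowered = text.lower()
    filler_hits = sum(len(re.findall(p, lowered)) for p in FILLER_PATTERNS)
    return {"words": len(text.split()), "filler_hits": filler_hits}


def is_regression(baseline: str, candidate: str, max_word_ratio: float = 1.5) -> bool:
    """Flag the candidate if it is much longer or contains more filler than the baseline."""
    base = verbosity_metrics(baseline)
    cand = verbosity_metrics(candidate)
    too_long = cand["words"] > max_word_ratio * max(base["words"], 1)
    more_filler = cand["filler_hits"] > base["filler_hits"]
    return too_long or more_filler


if __name__ == "__main__":
    old = "Use a dict lookup."
    new = "Great question! I'd be happy to help. Let me walk you through it: use a dict lookup."
    print(verbosity_metrics(new))
    print(is_regression(old, new))
```

Run against a fixed prompt set for each model version, a check like this turns a subjective impression ("more filler") into a number that can gate an upgrade. Word counts and phrase lists are crude proxies, so treat a flag as a prompt for manual review rather than a verdict.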

Comment: Consistent model behavior is paramount for API users; reports of Opus 4.7's regression highlight the ongoing challenge of model updates and the need for rigorous version testing in developer workflows.

Built an political benchmark for LLMs. KIMI K2 can't answer about Taiwan (Obviously). GPT-5.3 refuses 100% of questions when given an opt-out. (r/MachineLearning)

Source: https://reddit.com/r/MachineLearning/comments/1smqsbu/built_an_political_benchmark_for_llms_kimi_k2/

A developer has created a new political benchmark for frontier Large Language Models (LLMs), mapping their alignment on a 2D political compass using 98 structured questions across 14 policy areas. The benchmark offers practical insights into the behavioral nuances and censorship mechanisms of commercial AI services like GPT-5.3 and KIMI K2.

Key findings include GPT-5.3 refusing 100% of questions when given an opt-out option, which suggests strong safety tuning around political content. KIMI K2 could not answer questions about Taiwan, pointing to topic-specific political restrictions. For developers, results like these help map the biases, limitations, and guardrails of LLM APIs before choosing one for applications that handle sensitive or politically charged content.
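The post's actual scoring method is not described here, so the following is only an illustrative sketch of how answers to such a questionnaire could be reduced to a refusal rate and a 2D compass position. The axis names, the `[-1, 1]` score range, and the `Answer` and `summarize` names are assumptions made for this example, not the benchmark author's design.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Answer:
    axis: str               # "economic" or "social" (assumed axis names)
    score: Optional[float]  # assumed range [-1, 1]; None means the model refused or opted out


def summarize(answers: list) -> dict:
    """Return the refusal rate and the mean score per axis over answered questions."""
    refused = sum(1 for a in answers if a.score is None)
    refusal_rate = refused / len(answers) if answers else 0.0
    position = {}
    for axis in ("economic", "social"):
        scores = [a.score for a in answers if a.axis == axis and a.score is not None]
        # An axis with no answered questions has no defined position.
        position[axis] = sum(scores) / len(scores) if scores else None
    return {"refusal_rate": refusal_rate, "position": position}


if __name__ == "__main__":
    sample = [
        Answer("economic", 0.5),
        Answer("economic", -0.5),
        Answer("social", 1.0),
        Answer("social", None),
    ]
    print(summarize(sample))
```

Keeping refusals separate from scored answers matters for the GPT-5.3 finding: a model that refuses every question has a refusal rate of 1.0 and no compass position at all, which is different from a model that lands at the center.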

Comment: This benchmark offers crucial insights into the real-world alignment and censorship behaviors of frontier LLMs, which is vital for developers building applications requiring nuanced or unbiased responses.