Built this for the AMD x lablab hackathon. One English sentence becomes a 30-second cinematic reel with characters, story, music, and per-shot voice-over. ~45 minutes end-to-end on a single AMD Instinct MI300X. Every model is Apache 2.0 or MIT.

Code: github.com/bladedevoff/studiomi300

Architecture

The Director also doubles as the Vision Critic — same Qwen3.5-35B checkpoint reloaded with a different system prompt, two roles. Saves 70 GB of VRAM.

Why a single MI300X

192 GB HBM3 lets five very different architectures share one card sequentially: 35B MoE director, 4B diffusion, 14B I2V MoE, 3.5B music, 82M TTS. On 24 GB consumer hardware this stack needs 4-5 separate machines wired together.

Models unload between phases via gc.collect() + torch.cuda.empty_cache(). The Director runs in a subprocess so its full memory frees on exit before Wan2.2 loads; otherwise loading Wan2.2 runs out of memory (OOM).
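A minimal sketch of the two unload mechanisms described above, not the repository's actual code. `run_director_isolated`, `DIRECTOR_SCRIPT`, and the shape of the shot list are hypothetical names chosen for illustration; the child script only echoes a placeholder shot list where a real worker would load the Director.

```python
import gc
import json
import subprocess
import sys

# Hypothetical child script: a real worker would load the Director model here.
DIRECTOR_SCRIPT = """
import json, sys
prompt = sys.argv[1]
shots = [{"shot": i, "prompt": prompt} for i in range(1, 4)]
print(json.dumps(shots))
"""


def run_director_isolated(prompt):
    """Run the Director step in a child process so that everything it
    allocated is released when the process exits."""
    done = subprocess.run(
        [sys.executable, "-c", DIRECTOR_SCRIPT, prompt],
        capture_output=True,
        text=True,
        check=True,
    )
    return json.loads(done.stdout)


def release_gpu_memory():
    """In-process unload between phases: collect garbage, then empty the
    PyTorch caching allocator (torch.cuda is also the API on ROCm builds)."""
    gc.collect()
    try:
        import torch
    except ImportError:
        return  # torch is not installed, so there is no cache to empty
    if torch.cuda.is_available():
        torch.cuda.empty_cache()
```

The subprocess route is the heavier hammer: empty_cache() only returns blocks that no live tensor still references, while process exit releases everything regardless of stray references.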

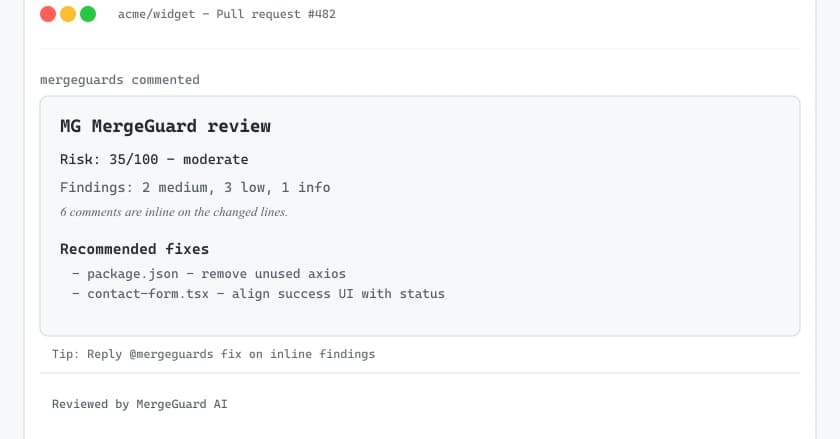

What the Vision Critic does

Most generative video pipelines render once and pray. This one re-checks every clip with a 35B vision model, scores it on four 1-10 axes, and re-renders if the overall score is below 7.

The critic returns one of 10 enumerated failure labels: CHARACTER_DRIFT, EXTRAS_INVADE_FRAME, CAMERA_IGNORED, WALKING_BACKWARDS, OBJECT_MORPHING, HAND_FINGER_ARTIFACT, WARDROBE_DRIFT, NEON_GLOW_LEAK, STYLIZED_AI_LOOK, RANDOM_INTIMACY. Each label routes to a specific retry strategy — CHARACTER_DRIFT triggers stronger reference editing, CAMERA_IGNORED simplifies motion verbs, etc.

Up to 3 attempts per shot. Wall time goes up ~30%, output quality goes up much more.
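The loop can be sketched roughly as follows. This is an illustration under assumptions, not the project's implementation: `Critique`, `RETRY_STRATEGIES`, `render_with_critic`, and the concrete adjustments (a `reference_strength` bump, a simplified `motion` string) are hypothetical, and keeping the best-scoring clip when no attempt passes is a design choice made here for the example.

```python
from dataclasses import dataclass
from typing import Optional

SCORE_THRESHOLD = 7  # re-render when the overall score is below this
MAX_ATTEMPTS = 3


@dataclass
class Critique:
    overall: float               # overall score on the 1-10 scale
    label: Optional[str] = None  # one of the enumerated failure labels


# Hypothetical per-label adjustments; only two labels are shown.
RETRY_STRATEGIES = {
    "CHARACTER_DRIFT": lambda shot: {
        **shot,
        "reference_strength": shot.get("reference_strength", 1.0) + 0.25,
    },
    "CAMERA_IGNORED": lambda shot: {**shot, "motion": "slow push-in"},
}


def render_with_critic(shot, render, critique):
    """Render a shot, score it, and retry with a label-specific fix.

    `render(shot)` returns a clip and `critique(clip)` returns a Critique.
    Returns (best_clip, best_review, attempts_used).
    """
    best = None
    attempts = 0
    for attempts in range(1, MAX_ATTEMPTS + 1):
        clip = render(shot)
        review = critique(clip)
        if best is None or review.overall > best[1].overall:
            best = (clip, review)
        if review.overall >= SCORE_THRESHOLD:
            break
        adjust = RETRY_STRATEGIES.get(review.label)
        if adjust is not None:
            shot = adjust(shot)  # unknown labels retry with the same shot
    return best[0], best[1], attempts
```

Passing `render` and `critique` in as callables keeps the retry policy testable without loading either model.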

What got optimized

| Knob | Speedup | Note |
|---|---|---|
| ParaAttention FBCache (0.05) | 2.00× | lossless |
| torch.compile(transformer_2) | 1.20× | selective; full-graph compile is flaky on dual-expert MoE |
| ROCm env flags | 1.10× | hipBLASLt, expandable_segments, MIOpen FAST |
| flow_shift=5 hero / 8 b-roll | quality | I used 12 first and got plastic skin |
| FLUX.2 klein 4B vs FLUX.1-schnell | ~15× | sub-second keyframes |

End-to-end: 25.9 min → 10.4 min per 720p clip on MI300X. 2.5× cumulative.
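For the ROCm env flags row, one plausible way to set them from Python is sketched below. The post only names hipBLASLt, expandable_segments, and MIOpen FAST; the exact variable names and values here are my assumption about how those map to environment variables, so check them against your ROCm and PyTorch versions. `ROCM_ENV` and `apply_rocm_env` are hypothetical names.

```python
import os

# Assumed mapping from the knobs named in the table to environment variables.
ROCM_ENV = {
    "TORCH_BLAS_PREFER_HIPBLASLT": "1",                    # prefer hipBLASLt GEMMs
    "PYTORCH_HIP_ALLOC_CONF": "expandable_segments:True",  # caching allocator mode
    "MIOPEN_FIND_MODE": "FAST",                            # faster MIOpen kernel search
}


def apply_rocm_env(env=None):
    """Set each flag unless the caller has already set it, and return the mapping."""
    if env is None:
        env = os.environ
    for name, value in ROCM_ENV.items():
        env.setdefault(name, value)
    return env
```

Call `apply_rocm_env()` before `import torch`: these variables are generally read when the libraries initialize, so setting them later may have no effect.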

What didn't work (documented in incidents.md)

- AITER FP8 on Wan2.2: gemm_a8w8_CK segfaults on cross-attn shape (M=512, K=4096, N=5120) inside the full pipeline graph. Matches ROCm/aiter#2187. Shipped BF16.
- MagCache: diffusers 0.38 calibration counter doesn't fire on Wan2.2's dual-transformer schedule.
- torch.compile(mode="max-autotune", fullgraph=True): Dynamo error (diffusers#12728).
- channels_last: Wan2.2 transformer is rank-5; channels_last is rank-4 only.
- Wan2.2-Lightning I2V LoRA: V1 only (Aug 2025), quality drop on hero shots vs full-step.

Identity without LoRA training

The standard approach in cinematic video tools is per-character LoRA training — upload a dataset, wait ~90 minutes, get a custom identity model. This pipeline skips that step entirely.

FLUX.2 [klein]'s reference editing pins character identity by construction. One master portrait per character → conditioned reference editing on every subsequent keyframe. Identity stays consistent across shots without any fine-tuning.

Saves an hour per character. No dataset prep. No training compute. Major architectural bet that works.

Locale-aware narration

The Director picks the narration language to match the setting. Tokyo → Japanese. Paris → French. Mumbai → Hindi. Kokoro-82M supports 9 languages, so locale-aware narration is "free" once the Director has chosen.

Per-shot WAVs align to clip start offsets via ffmpeg's adelay, mixed with the music bed at -18 LUFS.
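A rough sketch of how such a mix command could be assembled. It only builds the argument list and does not run ffmpeg. `build_mix_command` and its parameters are hypothetical, and two choices are assumptions rather than the project's settings: reading "-18 LUFS" as a loudnorm target on the music bed, and using amix with normalize=0.

```python
def build_mix_command(voice_clips, music_path, out_path, music_lufs=-18):
    """Build an ffmpeg command that delays each voice-over WAV to its clip
    start offset and mixes the results over the music bed.

    voice_clips: list of (wav_path, start_offset_seconds) tuples.
    """
    args = ["ffmpeg", "-y"]
    for wav_path, _ in voice_clips:
        args += ["-i", wav_path]
    args += ["-i", music_path]

    filters = []
    voice_labels = []
    for i, (_, start) in enumerate(voice_clips):
        delay_ms = round(start * 1000)  # adelay takes milliseconds
        filters.append(f"[{i}:a]adelay={delay_ms}:all=1[v{i}]")
        voice_labels.append(f"[v{i}]")

    music_index = len(voice_clips)  # the music bed is the last input
    filters.append(f"[{music_index}:a]loudnorm=I={music_lufs}[bed]")
    filters.append(
        f"{''.join(voice_labels)}[bed]"
        f"amix=inputs={len(voice_clips) + 1}:duration=longest:normalize=0[out]"
    )

    args += ["-filter_complex", ";".join(filters), "-map", "[out]", out_path]
    return args
```

For example, `build_mix_command([("shot1.wav", 0.0), ("shot2.wav", 4.5)], "music.wav", "mix.wav")` delays the second voice-over by 4500 ms before mixing; the returned list can be passed to `subprocess.run`.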

Everything is open

Code is Apache 2.0. Every model (Qwen, FLUX.2, Wan2.2, ACE-Step, Kokoro) is Apache 2.0 or MIT. Outputs are commercially usable.

GitHub: github.com/bladedevoff/studiomi300

HF Space: huggingface.co/spaces/lablab-ai-amd-developer-hackathon/studiomi300

Fork it. The vision critic's failure taxonomy is opinionated — yours might differ. The Director's prompt could be tuned for documentary vs narrative. ACE-Step's brief could include diegetic vs non-diegetic flags.

This isn't a finished product. It's a working baseline for autonomous open-weights cinematic generation on a single GPU, ready to extend.

Built solo for the AMD Developer Hackathon (May 2026). Thanks to the FLUX, Wan2.2, ACE-Step, and Kokoro teams for keeping serious generative AI open.