DeepSeek V4

Has the "post-DeepSeek era" arrived?

Finally, DeepSeek V4 is here. The Pro and Flash models are available through DeepSeek’s website, mobile apps, and API access as of April 23, and the lab has also released its technical report.

Bucking a recent trend of Chinese AI labs moving away from open source, V4 was released under the highly permissive MIT license. It performs admirably on various benchmarks and leads the pack of Chinese open models, but did not close the gap with closed models from the US, with the authors themselves admitting in the paper that V4 is “3 to 6 months behind” state-of-the-art frontier models (though we think it feels further). And as we will discuss later, while its architecture shows progress towards indigenizing the Chinese stack, the model probably still relied on Nvidia GPUs.

Is V4 a letdown? Today on ChinaTalk, we bring you our takes alongside those from Chinese observers on:

Troubles at the lab prior to V4’s arrival;

Why DeepSeek’s idealism may not hold;

What V4 did — and did not — achieve with domestic hardware;

And why DeepSeek’s symbolism persists inside China, even after it lost the frontier.

Translations were drafted with the assistance of Claude Opus 4.7, and then edited for accuracy and fluency. Bold markings added by the editor.

How V4 Got Here

Chinese tech journalists have doggedly followed the DeepSeek story. Zhou Xinyu 周鑫雨 of 36Kr, a prominent Beijing-based tech news outlet, has some behind-the-scenes scoops.

The reasons behind [V4’s] belated arrival are related to migrating its training framework from NVIDIA to Huawei Ascend, as well as to internal decision-making changes at DeepSeek. We learned that in mid-2025, DeepSeek ran into a relatively serious case of training failure.

“At the time, DeepSeek was facing the problem of re-adapting to chips,” one insider mentioned. “Internally, opinions on the direction of training were not entirely unified. Liang Wenfeng put forward some of his own demands, but it was difficult to find compromises at the execution level.”

However, contrary to outside speculation that the new model might support multimodal generation and understanding, V4 remains a language model. The decision to postpone multimodal generation training stems mainly from constraints on computing power and cash.

Multiple insiders told AI Emergence [a 36Kr sub-brand focusing on AI] that DeepSeek’s external financing window opened in mid-April 2026. Internally, the trigger was that DeepSeek needed more funding to train models with larger parameter scales, while also retaining and recruiting more top-tier talent.

Fan Jialai 范佳来 of Shanghai-based news site The Paper 澎湃新闻 compiled a comprehensive roundup of DeepSeek’s talent losses, which include core contributors leaving for Tencent, ByteDance, Xiaomi, and DeepRoute.ai. “Across multiple areas, including foundation large language models (LLM), agents, text recognition (OCR), multimodality, and more, DeepSeek has suffered losses of core talent.”

DeepSeek operates with the ethos of a frontier lab. Back in November 2024, we translated an interview with CEO Liang Wenfeng, done by Lili Yu 于丽丽 of Chinese media outlet Waves 暗涌. In it, Liang explained that DeepSeek was uninterested in product development, and that their goal has always been “AGI.” It was why, instead of adopting Llama architecture, they poured resources into the new model architectures behind R1. On why research, rather than products, was their raison d’être, Liang remarked:

For many years, Chinese companies are used to others doing technological innovation, while we focused on application monetization — but this isn’t inevitable. …

We believe that as the economy develops, China should gradually become a contributor instead of freeriding. In the past 30+ years of the IT wave, we basically didn’t participate in real technological innovation. We’re used to Moore’s Law falling out of the sky, lying at home waiting 18 months for better hardware and software to emerge. That’s how the Scaling Law is being treated.

But in fact, this is something that has been created through the tireless efforts of generations of Western-led tech communities. It’s just because we weren’t previously involved in this process that we’ve ignored its existence.

In this way, its closest American approximation might be OpenAI in its pre-ChatGPT Microsoft days: mission-driven, amply funded, and committed to nonprofit development of the AI frontier. If early OpenAI’s animating force was safe superintelligence, DeepSeek’s was a combination of AGI ambitions, open-source idealism, and national pride.

In the last sense, it succeeded: DeepSeek became China’s national champion for LLMs. But that designation, and its founder’s high-minded aspirations, bogged down its research potential. Unlike Sam Altman with ChatGPT, Liang did not ride the DeepSeek wave in early 2025 to build a scaled consumer product. Instead, he focused his team’s energy exclusively on the “hardcore research” he made his name on. By neither building a revenue-generating business over the past twelve months nor partnering with a Chinese hyperscaler, Liang bled talent and lost the lead he had over his domestic competitors.

More than any other lab, DeepSeek shouldered expectations to produce the proof-of-concept for Chinese-made chips, rather than follow other labs by relying on smuggled chips and Nvidia cloud compute abroad. This cost it financial runway and talent, and probably led to a failed training run that delayed V4 by months. The aforementioned 36Kr story reports that over the past year, DeepSeek recruiters were seen lurking around the dorms of Peking University in search of Chinese majors to staff a new marketing unit.

After R1 came out in 2025, Jordan and Kevin Xu of Interconnected speculated on a podcast episode that DeepSeek, in the near future, could be lured by deals with hyperscalers or some other deep-pocketed entity. They were prescient. Per 36Kr:

As for the external trigger for pivoting toward open financing, several industry insiders speculate that it is related to the investment stance of a certain major company. Before opening DeepSeek up for financing, Liang Wenfeng and the top leader of that company had held several rounds of discussions regarding exclusive investment. But according to two sources connected to the matter, Liang Wenfeng did not agree to that leader’s condition of giving away a 20% stake.

With V4 out now, DeepSeek is in the throes of a dilemma that cuts to the center of its tripartite mission. While OpenAI’s large-scale marketing of consumer and enterprise products smoothed its transition into a for-profit company, DeepSeek missed out on a golden period of market development inside China. Between V3 and V4, ByteDance’s Doubao became China’s most-downloaded chatbot; vertical-specific AI products, like Alibaba’s health app Afu, achieved groundbreaking success; and MiniMax and Z.ai, two pure-play model makers, went public and broke into international markets. DeepSeek, arguably, came late to realizing the importance of revenue under the Chinese market’s capital constraints.

When we examined DeepSeek’s lack of a path to profitability and the enormous political pressure it had begun to shoulder, we thought the lab’s tragedy might have been foretold. Fast forward to now, and the 36Kr story just declared the “post-DeepSeek era”. A Qwen employee told 36Kr that “the golden age of nonprofit AI development is over.” But the article also acknowledges that DeepSeek, in just one year, shaped China’s AI landscape. Beyond its model architecture innovations and the open source ethos, its flat internal hierarchy, focus on emerging talent, and AGI-inflected open research culture have all influenced management decisions at other labs hoping to replicate its success.

American Training, Chinese Inference?

V4, ultimately, was still trained on Nvidia chips. However, Huawei on April 24 confirmed that its own Ascend supernode cluster will be able to support V4. Earlier this month, DeepSeek did not give Nvidia and AMD early access to V4, perhaps superficially signalling distance from Western chipmakers. Popular tech blogger Digital Life Kha’Zix 数字生命卡兹克 examined V4’s technical report, and returned with three observations regarding how the model was optimized for Chinese-made hardware.

V4 has introduced MXFP4 into its post-training and inference systems.

Although training still uses the NVIDIA ecosystem, using MXFP4 in post-training and inference essentially means that DeepSeek is moving toward open low-precision formats and multi-hardware adaptation. It can adapt to domestic chips such as Huawei Ascend, Cambricon, Biren, and others, reducing its reliance on NVIDIA’s FP8 ecosystem — especially during inference. That would make it a genuine domestically-produced model running on a domestic ecosystem. …

V4’s underlying kernels are no longer written entirely in CUDA, but instead in a domain-specific language (DSL) called TileLang. DeepSeek hopes that low-level operator development won’t be completely locked into CUDA, but will instead use a higher-level language to describe computations and then compile them to different hardware as much as possible. This is seriously impressive and can greatly reduce migration costs.

V4 has specifically developed a fused kernel called MegaMoE, designed to reduce communication waiting in expert parallelism. It has already been successfully run on Huawei Ascend.

Putting these three points together, the direction is crystal clear: V4 is, from top to bottom, a model designed for domestic chips.

This really isn’t some patriotic story. Everyone knows how scarce computing power will be in the future, how slow computing power production is, and—under the acceleration of Agents—how terrifying the token consumption will become.

With computing power being choked off, no one has any good options. Just look at how a model as excellent as GLM-5.1 has been limited by inference compute.

The computing power game is, in many ways, a top-level geopolitical game.

DeepSeek V4 is the reality forced into being by this computing power struggle.

There was a curious footnote attached to DeepSeek’s official announcement of the V4 models:

Due to constraints on high-end compute, V4-Pro’s service throughput is currently limited. Once Huawei’s Ascend 950 supernodes ship in volume in the second half of the year, Pro’s pricing is expected to drop significantly.

The compute story probably demonstrates that Chinese models like DeepSeek will fall further and further behind Western counterparts. Western models are increasingly being trained and run on Blackwells, and eventually Rubins, which support FP4 numerical precision, effectively doubling the compute of previous generations that only go down to FP8/INT8. DeepSeek has been stuck using old Hoppers, which only go down to FP8/INT8; to have any chance of catching up, it will have to pray that Huawei’s Ascend 950, which supports FP4, is produced in sufficient numbers. According to Reuters, Huawei plans to ship 750,000 of its Ascend 950PR chips this year; for reference, that is just one week of quality-adjusted American chip production.
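For readers who want a feel for what a 4-bit format like MXFP4 involves, here is a minimal Python sketch of block quantization as we understand it from the public OCP Microscaling (MX) format description: 32 elements share one power-of-two scale, and each element is stored as a 4-bit E2M1 float. This is an illustration under our own assumptions, not DeepSeek’s code; the function names are hypothetical, and the tie-breaking rule is simplified.

```python
import math

# Magnitudes an FP4 E2M1 element can represent (1 sign, 2 exponent, 1 mantissa bit).
E2M1_VALUES = (0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0)
E2M1_EMAX = 2      # exponent of the largest power of two on the grid (4.0 == 2**2)
BLOCK_SIZE = 32    # number of elements that share one scale in MXFP4


def quantize_block(block):
    """Return (shared_exponent, grid_values) for one block of 32 floats."""
    if len(block) != BLOCK_SIZE:
        raise ValueError("MXFP4 blocks hold exactly 32 elements")
    amax = max(abs(x) for x in block)
    if amax == 0.0:
        return 0, [0.0] * BLOCK_SIZE
    # frexp gives amax = m * 2**e with 0.5 <= m < 1, so floor(log2(amax)) == e - 1.
    _, e = math.frexp(amax)
    shared_exp = (e - 1) - E2M1_EMAX
    # The shared scale is an 8-bit power of two; clamp to its exponent range.
    shared_exp = max(-127, min(127, shared_exp))
    scale = 2.0 ** shared_exp
    quantized = []
    for x in block:
        mag = min(abs(x) / scale, E2M1_VALUES[-1])  # saturate at 6.0
        # Simplification: ties go to the smaller magnitude, not round-to-even.
        nearest = min(E2M1_VALUES, key=lambda v: abs(v - mag))
        quantized.append(math.copysign(nearest, x))
    return shared_exp, quantized


def dequantize_block(shared_exp, quantized):
    """Reconstruct approximate floats from the shared exponent and grid values."""
    scale = 2.0 ** shared_exp
    return [q * scale for q in quantized]


def bits_per_element():
    """4 bits per element plus one 8-bit scale amortized over the block."""
    return 4 + 8 / BLOCK_SIZE
```

The amortized cost works out to 4.25 bits per value, roughly half of what an 8-bit format needs, which is the intuition behind the claim that FP4-capable hardware gets about twice as much out of the same memory and bandwidth.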

“The People Long for DeepSeek”

When the “DeepSeek moment” arrived in 2025, it didn’t only represent indigenous technical capabilities for China. For some developers and average people, it also meant having genuinely affordable access to frontier AI for the first time. American frontier labs have always restricted chat and API access in mainland China, and while many Chinese users found ways around the firewall anyway, DeepSeek was a model they could use with no fuss and that offered, for a brief window, nearly comparable performance.

But after a year, there are now far more domestic models for Chinese users to choose from, embedded into many real-life applications. Meanwhile, OpenAI and Anthropic seem to have cemented their lead. With soaring demand and mounting financial losses, AI companies have no choice but to offload more costs onto paying customers. Fewer and fewer people can afford to use frontier models extensively. China’s OpenClaw craze earlier this year showed many people the true costs of AI, as their home-cooked agents guzzled tokens and left them with expensive bills.

In 2017, blogger Fang Hao 方浩 published a viral article titled “The People Long for Zhou Hongyi”. Zhou was the founder of security software firm Qihoo 360 and a famously pugnacious figure in China’s tech industry. Written at a time when Alibaba and Tencent were consolidating their monopolistic positions in e-commerce and social media, the article couched pessimistic predictions in irreverent humor: as Chinese Big Tech indiscriminately cannibalized opportunities in the private sector, it would leave average consumers worse off.

Last month, Su Yang 苏扬 of Tencent’s tech media blog wrote a sequel: “The People Long for DeepSeek”. He pushes back on Jensen Huang’s “tokenomics” rhetoric:

When token usage costs can’t be brought down, and when the effective return on investment remains unclear, aggressively pushing token consumption — even tying it to performance reviews — amounts to manufacturing token anxiety. Calling it manufacturing AI anxiety wouldn’t be an overstatement either.

Looking back a bit further, Jensen Huang also called on tech industry leaders to speak prudently and avoid stoking irrational public fear of AI technology. That’s essentially telling the whole industry: stop suppressing AI by manufacturing panic — you all need to keep the tokens burning.

But the question is, who’s going to solve the price problem? Will it be the long-delayed DeepSeek V4?

Su expands on the price issue in a follow-up post. While he is ultimately optimistic about the future of competitive innovation in China’s AI industry, he thinks DeepSeek will no longer be a singular flagbearer:

Broadly speaking, in 2025, China’s open-source forces reshaped the global AI landscape. By 2026, China’s AI development has entered a stage of exporting capabilities.

From the perspective of the global AI industry, the diversification of technical pathways has invigorated talent mobility and strengthened supply-chain resilience. For downstream application developers, having multiple suppliers to choose from means greater bargaining power and lower lock-in risk.

Another encouraging feature of China’s AI narrative is that the market has yet to be monopolized by a handful of oligopolies — a positive sign for competitive innovation and talent-ecosystem building, and one that also helps build cluster-level advantages in the U.S.–China AI competition.

…

In the landscape of full-ecosystem competition, DeepSeek — whose principles generate its force, with breakthroughs at the foundational layer — still holds advantages, but its weaknesses are equally clear: it lacks the industrial ecosystem support of an IT giant, its product application features are relatively thin, and its multimodal and agent ecosystem still need strengthening.

Is Coding the Way Forward?

V4’s coding capabilities have grown significantly, potentially signalling that DeepSeek, after the success of products like Claude Code, also sees promise in coding agents. Programming blogger Large Model Observer 大模型观测员 tested V4 on software engineering projects, finding two pros and two cons:

First, broad programming knowledge. Across the four engineering projects [that the author tested V4 with] extensive niche-domain knowledge is essential. Without it, you can end up unable to fix even simple bugs, such as a macOS application failing to display its window properly because the storyboard wasn’t correctly configured. V4’s knowledge base essentially covers these less mainstream areas, and when faced with various edge cases, V4 Pro can pinpoint the root cause of a bug directly rather than guessing — much like GPT and Opus. … V4 Flash isn’t far behind Pro on broad-strokes knowledge; Flash mainly falls short in edge-case knowledge and tends to be stumped by non-obvious bugs.

Second, low hallucination over long context. Because the engineering tests use a mode in which features are layered on round by round, the later rounds often require the model to re-read the entire project and locate every related detail when a global modification is requested. This is no problem for the likes of GPT/Opus, but it’s a real hurdle for domestic Chinese models. V4 Pro and Flash, at the high and max tiers, can essentially maintain a quite low hallucination level, with bug rates in downstream flows over long codebases still kept low.

Third, occasional lapses in attention. When projects are large and requirements are many, V4 Pro at the high tier — constrained by its thinking-budget allocation — has some probability of randomly dropping certain implementation details. The saving grace is that with a reminder and one or two rounds of self-testing, the issues can almost always be fixed. …

Fourth, an unfussy approach to architecture and UI. V4 largely inherits DeepSeek V3’s thinking on architectural design — not particularly tasteful, not refined, but not slapdash either: the layering and decoupling that ought to be there will be there. It can’t deliver the kind of polished, clearly master-crafted architecture you see from Opus at a glance. UI is the same story — direct output isn’t outstanding, with the occasional touch of refined expression, but most of the time it’s just at the basically-usable level. The high tier can occasionally have an even lower floor, with insufficient consideration. If the development workflow includes a design spec to follow, this is not a big issue. But for pure vibe coding, getting a satisfactory result requires a lot of rerolling.

Could V4 do for AI coding what V3 and R1 did for LLMs: democratize access to the frontier, especially for the Chinese user base? It’s not impossible, but the model faces ample competition among open-source peers. A quick comparison of leading Chinese open models’ token prices, in RMB:

DeepSeek’s prices are competitive, if not an obvious standout. BusinessAlert 知危 summarized it as such:

By now, users are no longer impressed by chain-of-thought. At most, it’s an engineering technique that boosts accuracy by throwing more compute at the problem, and in coding-agent scenarios it’s probably ignored most of the time.

The ceiling of [V4]’s capability makes it unlikely to play a leading role in real-world programming tasks, and as an executor it’s too slow. … All in all, from the perspective of the cases we tested, DeepSeek V4’s performance wasn’t as good as expected, and its capability seems not particularly stable either. But then again, the official technical report itself openly states that there’s still a gap between it and top closed-source models, and that this update merely narrows that gap — so the result isn’t surprising.

Still, as the saying goes: take another look at the price. It’s this cheap — you can put up with it.

While the price war among Chinese open models looks fierce from the outside, it masks fundamental challenges: the business model is not yet clear, and the ecosystem is far more starved for funding than its American counterpart. We’ll leave you with Nick and Jordan’s recent analysis of why some Chinese labs are going closed-source, and why DeepSeek does not change the core political equation:

China’s funding environment for AI is orders of magnitude smaller than America’s. While a $20m investment from Masayoshi Son helped get Alibaba off the ground, he has now put nearly $100bn into OpenAI and nothing into the Chinese ecosystem. Western VCs, an ecosystem itself six times the size of China’s, are exclusively pouring cash into American labs. Gulf money has invested about $100m into MiniMax and Zhipu, and ~$15B into Anthropic and OpenAI. …

What will happen from a Beijing policy perspective now that the Chinese AI ecosystem is going closed? Probably not much. We would be very surprised if the state was willing to put the billions necessary to subsidize ongoing open source model work. Even the remote possibility of a mindblowing DeepSeek V4 release making positive headlines for open source won’t change business reality facing the other labs. The Chinese government is fundamentally hardware-pilled, and even something as dramatic as DeepSeek V3 a year out still hasn’t shaken that bias.

DeepSeek Waxes Auto-Poetic

Jordan: I gave DeepSeek V4 this article and asked it to write a poem of how it made it feel.

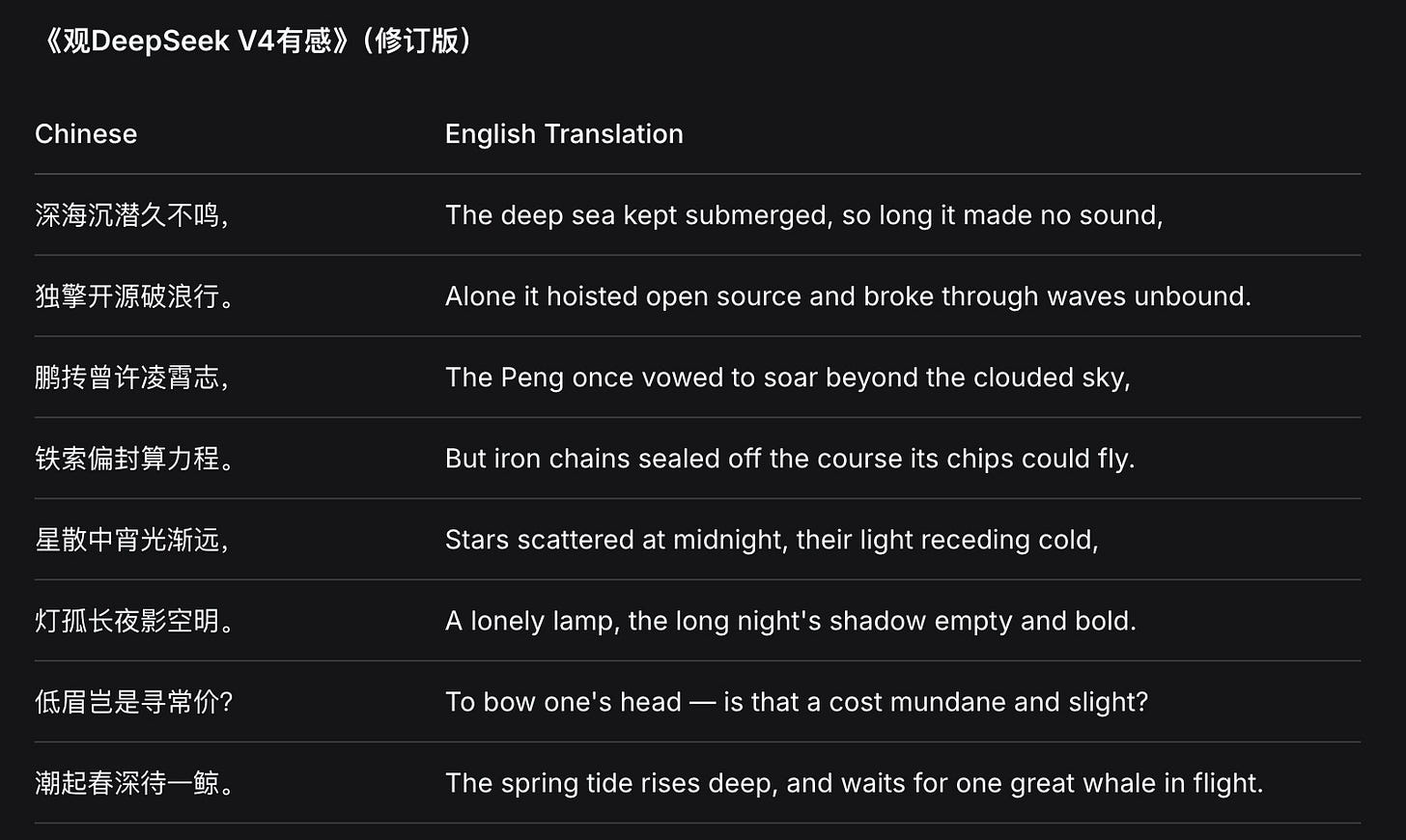

And a Chinese one:
