Learning from Demonstration with Failure Awareness for Safe Robot Navigation

arXiv cs.RO / 4/28/2026


Key Points

- The paper tackles a safety gap in learning-from-demonstration robot navigation, where training data mainly cover successful behaviors and provide little information about unsafe states.

- It argues that failure experiences (e.g., collisions) are informative about hazardous regions, but naïvely adding them to imitation/policy learning can worsen performance.

- The authors propose a failure-aware learning framework that separates how success and failure data are used: failure data inform value estimation in dangerous regions, while policy learning uses only successful demonstrations.

- Experiments in offline reinforcement learning settings, both in simulation and on real robots, show lower collision rates with no loss in task success, along with improved generalization across environments and robot platforms.
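The separation described in the third point can be illustrated with a toy tabular sketch. This is not the authors' implementation: the states, rewards, the averaged Q-update, and the names `estimate_q` and `extract_policy` are all assumptions made for illustration. Values are estimated from every logged transition, including collisions, while the policy may only choose among actions that appear in successful demonstrations.

```python
from collections import defaultdict

# Hypothetical logged transitions: (state, action, reward, next_state, done).
# Route "a" is longer but safe; route "b" is shorter but passes through state 2,
# where the same action sometimes ended in a collision.
SUCCESS = [
    (0, "a", 0.0, 1, False), (1, "go", 0.0, 3, False), (3, "go", 1.0, "goal", True),
    (0, "b", 0.0, 2, False), (2, "go", 1.0, "goal", True),
]
FAILURE = [
    (2, "go", -1.0, "crash", True),
    (2, "go", -1.0, "crash", True),
]

def estimate_q(transitions, gamma=0.9, sweeps=50):
    """Tabular offline Q estimate; targets for a repeated (s, a) are averaged."""
    q = defaultdict(float)
    actions_at = defaultdict(set)
    for s, a, _, _, _ in transitions:
        actions_at[s].add(a)
    for _ in range(sweeps):
        targets = defaultdict(list)
        for s, a, r, s2, done in transitions:
            # Bootstrap only from actions that were actually logged at s2.
            future = 0.0 if done else max(
                (q[(s2, b)] for b in actions_at[s2]), default=0.0
            )
            targets[(s, a)].append(r + gamma * future)
        for key, vals in targets.items():
            q[key] = sum(vals) / len(vals)
    return q

def extract_policy(success_transitions, q):
    """Per state, pick the highest-valued action seen in successful demos only."""
    candidates = defaultdict(set)
    for s, a, _, _, _ in success_transitions:
        candidates[s].add(a)
    return {
        s: max(sorted(acts), key=lambda a: q[(s, a)])
        for s, acts in candidates.items()
    }

# Values from success data alone favor the short route through state 2.
policy_naive = extract_policy(SUCCESS, estimate_q(SUCCESS))
# Adding failure data lowers the value of state 2; the policy still only
# selects among actions that appear in successful demonstrations.
policy_aware = extract_policy(SUCCESS, estimate_q(SUCCESS + FAILURE))

print(policy_naive[0], policy_aware[0])  # b a
```

In this toy setting the collision records never enter the set of candidate actions; they only lower the estimated value of the risky state, which is enough to steer the extracted policy onto the safer route.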

Related Articles

I Build Systems, Flip Land, and Drop Trap Music — Meet Tyler Moncrieff aka Father Dust

Dev.to

Whatsapp AI booking system in one prompt in 5 minutes

Dev.to

v0.22.1

Ollama Releases

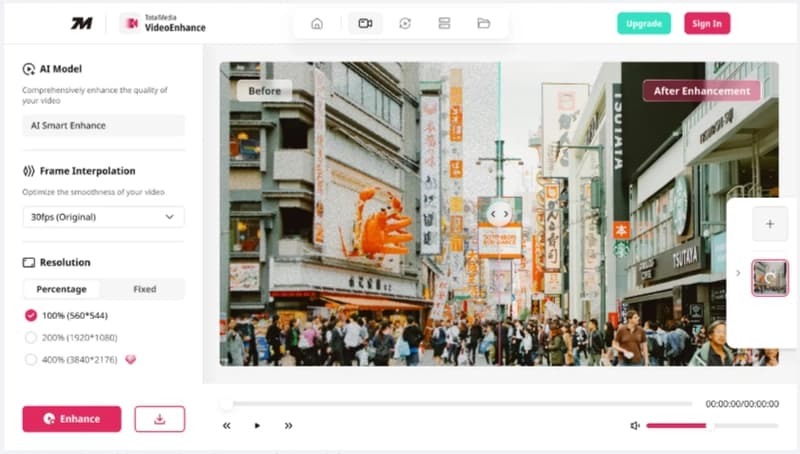

Launching TotalMedia: A Simpler Way to Fix and Convert Video Files

Dev.to

The best of Cloud Next '26: Gemini Enterprise Agent Platform. The perfect combination of Intelligence and Automation to generate VALUE.

Dev.to