Multimodal Fused Learning for Solving the Generalized Traveling Salesman Problem in Robotic Task Planning

arXiv cs.RO / 3/23/2026


Key Points

- MMFL (Multimodal Fused Learning) combines graph-based and image-based representations to solve the Generalized Traveling Salesman Problem (GTSP) in robotic task planning.

- It introduces a coordinate-based image builder that converts GTSP instances into spatially informative images, together with an adaptive resolution scaling strategy that adjusts to different problem sizes.

- The architecture includes a multimodal fusion module with dedicated bottlenecks to effectively integrate geometric and spatial features for real-time planning.

- Experiments show that MMFL significantly outperforms state-of-the-art methods across a range of GTSP instances, and physical robot tests confirm its real-world applicability and efficiency.
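
To make the image-builder idea concrete, here is a minimal sketch of how a GTSP instance might be rasterized with a size-dependent resolution. It is not the paper's implementation: the function names `adaptive_resolution` and `build_image`, the two-channel layout, and the scaling rule (`cells_per_node`, `base`, `max_res`) are all assumptions chosen for illustration. Coordinates are assumed to be normalized to the unit square, and each node carries the id of its cluster.

```python
import math


def adaptive_resolution(num_nodes, base=16, cells_per_node=4, max_res=256):
    """Hypothetical scaling rule (an assumption, not taken from the paper):
    choose a side length giving about `cells_per_node` pixels per node,
    clamped to the range [base, max_res]."""
    side = math.ceil(math.sqrt(num_nodes * cells_per_node))
    return max(base, min(max_res, side))


def build_image(coords, clusters, num_clusters):
    """Rasterize a GTSP instance into two square grids of equal size.

    coords:   list of (x, y) pairs, assumed to lie in [0, 1] x [0, 1]
    clusters: list of integer cluster ids in [0, num_clusters)
    Returns (occupancy, cluster_map), each a list of rows.
    """
    res = adaptive_resolution(len(coords))
    occupancy = [[0.0] * res for _ in range(res)]
    cluster_map = [[0.0] * res for _ in range(res)]
    for (x, y), c in zip(coords, clusters):
        # Map the coordinate to a pixel; x == 1.0 or y == 1.0 falls in the last cell.
        col = min(int(x * res), res - 1)
        row = min(int(y * res), res - 1)
        occupancy[row][col] += 1.0  # number of nodes landing in this pixel
        # Shift ids by one so that 0.0 is reserved for empty pixels.
        # If several nodes share a pixel, the last one written wins.
        cluster_map[row][col] = (c + 1) / num_clusters
    return occupancy, cluster_map


# Toy instance: four nodes in two clusters.
coords = [(0.1, 0.2), (0.8, 0.9), (0.5, 0.5), (1.0, 0.0)]
clusters = [0, 0, 1, 1]
occupancy, cluster_map = build_image(coords, clusters, num_clusters=2)
```

In this sketch, larger instances get finer grids so that nearby nodes are less likely to collapse into the same pixel, while the clamp keeps the image size bounded. A learned model would typically consume such grids through an image encoder alongside a graph encoder, but that part is outside the scope of this example.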

Related Articles

Interactive Web Visualization of GPT-2

Reddit r/artificial

[R] Causal self-attention as a probabilistic model over embeddings

Reddit r/MachineLearning

The 5 software development trends that actually matter in 2026 (and what they mean for your startup)

Dev.to

InVideo AI Review: Fast Finished

Dev.to

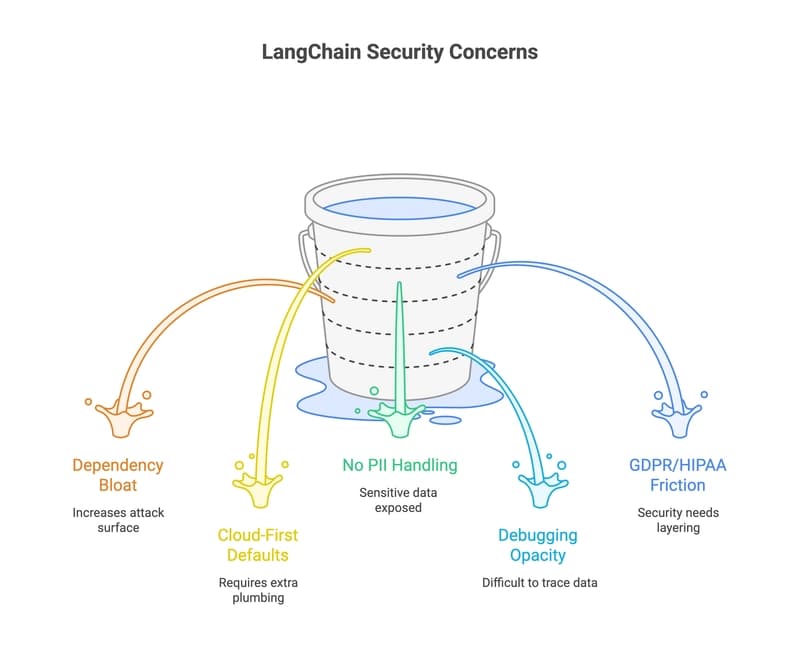

33 LangChain Alternatives That Won't Leak Your Data (2026 Guide)

Dev.to