![Formalizing statistical learning theory in Lean 4 [R]](https://external-preview.redd.it/vcCPMFQJ_Ba1RGnmGbl8xPO9fb1vZ01Ht3Hg4xF7i0Q.png?width=640&crop=smart&auto=webp&s=66c23bc95d8b7a4cf205d906d8b6fed9fda955ab)

I’ve been working on a Lean 4 project focused on formalizing parts of statistical learning theory. Current results include:

The main idea is to build a readable and pedagogically structured “theorem ladder” for ML theory rather than just isolated declarations. I’m trying to keep:

Compared to some existing Lean SLT efforts that focus more heavily on empirical-process infrastructure and abstract probability machinery, this project is currently more focused on explicit finite-sample PAC/Rademacher/stability routes and readable end-to-end theorem chains. I’d especially appreciate feedback on:

Thank you.

Formalizing statistical learning theory in Lean 4 [R]

Reddit r/MachineLearning / 5/9/2026

💬 Opinion · Developer Stack & Infrastructure · Ideas & Deep Analysis · Models & Research

Key Points

- The FormalSLT project formalizes key parts of statistical learning theory in Lean 4, with an emphasis on rigorous, readable theorem development.

- Current formalized results cover multiple standard learning-theory tools and bounds, including finite-class ERM bounds, Rademacher symmetrization, high-probability Rademacher bounds, VC-dimension connections (Sauer–Shelah), scalar contraction, linear predictor bounds, finite PAC-Bayes bounds, and algorithmic stability.

- The author’s main design goal is to create a “theorem ladder” with explicit assumptions, scoped theorem statements, and no use of Lean’s placeholder proofs (no `sorry`).

- Compared with other Lean SLT efforts that emphasize abstract probability and empirical-process infrastructure, this work prioritizes explicit finite-sample PAC/Rademacher/stability proof routes with end-to-end theorem chains aligned to standard SLT presentations.

- The author is seeking feedback on theorem organization, proof structure, naming/API decisions, and suggestions for useful next targets to formalize.

Related Articles

Helping ChatGPT better recognize context in sensitive conversations

Dev.to

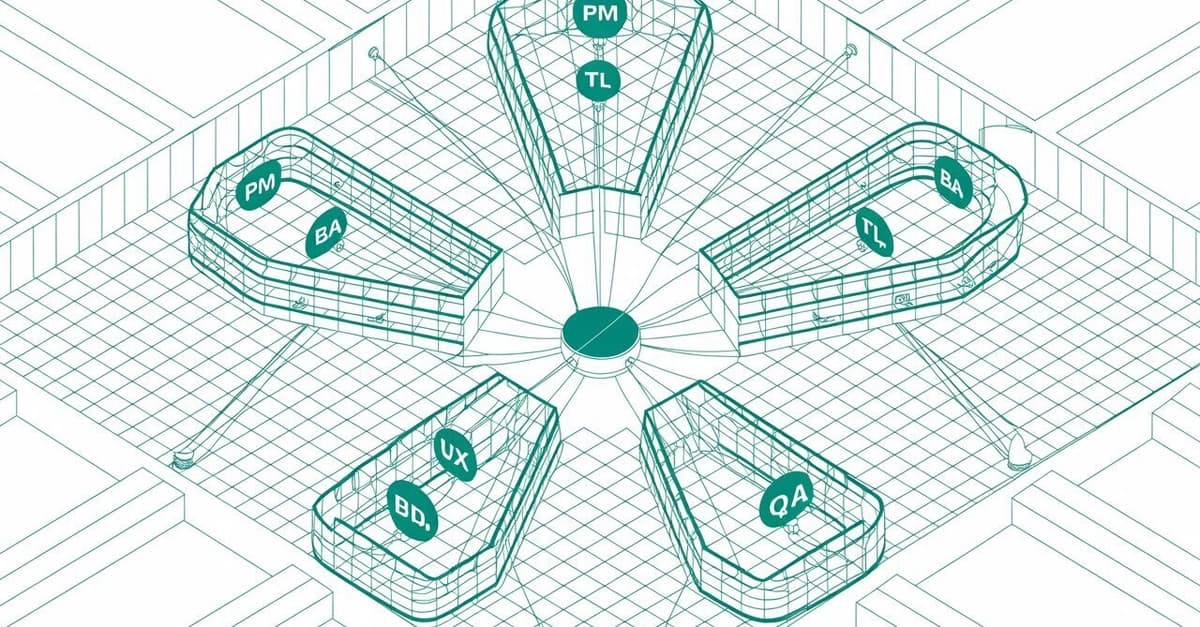

I Built a Local AI Team to Stop My Side Projects From Dying

Dev.to

The Code You Shipped Yesterday Won't Scale Tomorrow, Here's Why

Dev.to

**📈 I just launched NeuroArchAI Platform – and it's completely FREE on GitHub right now.**

Dev.to

From One-on-One to One-to-Many: Scaling Your Coaching with AI

Dev.to