From Instruction to Event: Sound-Triggered Mobile Manipulation

arXiv cs.RO / 4/16/2026


Key Points

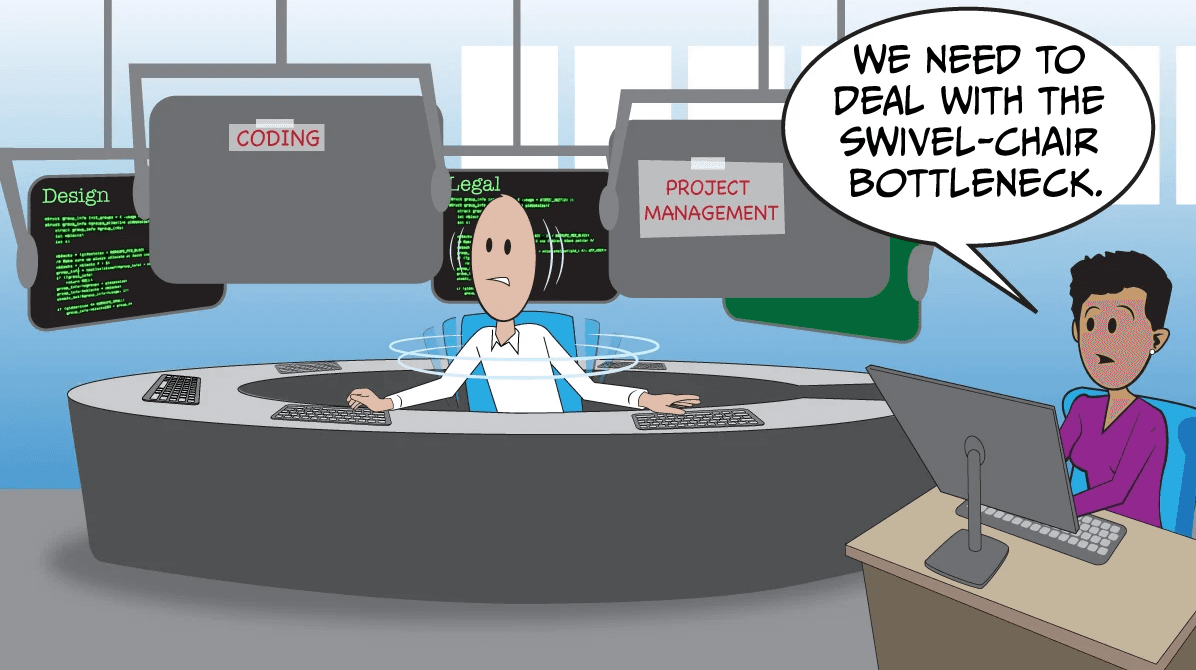

- The paper argues that mobile manipulation research has focused too narrowly on an instruction-driven paradigm, which keeps agents from reacting autonomously to dynamic events in their environment.

- It introduces a new task setting called sound-triggered mobile manipulation, requiring agents to perceive and interact with sound-emitting objects without explicit action-by-action instructions.

- To enable this, the authors develop Habitat-Echo, a data platform that combines acoustic rendering with physically grounded interaction in a simulated environment.

- The work proposes a baseline system with a high-level task planner and low-level policy models, designed to detect auditory events and decide appropriate interactions.

- Experiments, including a dual-source setup with overlapping acoustic interference, show that the agent can identify the primary sound source, interact with it first, and then move on to manipulate a secondary object, demonstrating robustness to interfering sounds.
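
The planner-plus-policy split described in the key points can be illustrated with a minimal Python sketch. It is not the authors' implementation and does not use the Habitat-Echo API: `SoundEvent`, `INTERACTIONS`, `plan`, `execute`, and `run_episode` are all hypothetical names, and treating the loudest source as the primary one is an assumption made here for illustration, since the summary does not say how the primary source is chosen.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SoundEvent:
    """A detected auditory event (hypothetical structure, not from the paper)."""
    label: str          # e.g. "alarm_clock"
    loudness_db: float  # estimated level at the agent's microphones
    bearing_deg: float  # estimated direction of arrival


# Hypothetical mapping from a sound label to the interaction it calls for.
INTERACTIONS = {
    "alarm_clock": "press_button",
    "phone": "pick_up",
}


def plan(events: List[SoundEvent]) -> List[str]:
    """High-level planner: order sources loudest first and emit subgoals.

    Assumption: "primary" means loudest. The paper may use another criterion.
    """
    subgoals = []
    for event in sorted(events, key=lambda e: e.loudness_db, reverse=True):
        action = INTERACTIONS.get(event.label, "inspect")
        subgoals.append(f"navigate_to:{event.label}@{event.bearing_deg:.0f}")
        subgoals.append(f"{action}:{event.label}")
    return subgoals


def execute(subgoal: str) -> bool:
    """Stand-in for a low-level policy; a real one would emit motor commands."""
    print("executing", subgoal)
    return True


def run_episode(events: List[SoundEvent]) -> List[str]:
    """Run subgoals in order, stopping at the first failure."""
    completed = []
    for subgoal in plan(events):
        if not execute(subgoal):
            break
        completed.append(subgoal)
    return completed


if __name__ == "__main__":
    # Dual-source case: two overlapping sounds, handled loudest first.
    run_episode([
        SoundEvent("phone", 55.0, 90.0),
        SoundEvent("alarm_clock", 70.0, -30.0),
    ])
```

In the dual-source example, the sketch visits the alarm clock before the phone because of the loudness ordering. No instruction is passed in at any point: the subgoal list is derived entirely from the detected events, which is the contrast with the instruction-driven setting that the paper draws.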
