ELAS: Efficient Pre-Training of Low-Rank Large Language Models via 2:4 Activation Sparsity

arXiv cs.LG / 5/6/2026


Key Points

- The paper introduces ELAS, a framework for efficiently pre-training low-rank LLMs by applying 2:4 structured sparsity to activations rather than leaving activation matrices full-rank.

- ELAS modifies low-rank feed-forward networks to use a squared ReLU activation, then converts the activations produced by that operation to the 2:4 structured sparse format supported by NVIDIA GPUs.

- Experiments on LLaMA models (60M to 1B parameters) show that ELAS incurs only minimal performance degradation compared with non-sparse baselines.

- The method provides training and inference speedups and reduces activation memory overhead, with the benefits becoming especially pronounced for large batch sizes.

- The authors state the code is publicly available via the ELAS Repo, enabling replication and further experimentation.
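
To make the second key point concrete, here is a minimal sketch of a squared ReLU followed by 2:4 sparsification of one activation row. It is an illustration only, not the authors' implementation: the function names are hypothetical, and the rule used here (keep the two largest-magnitude values in every group of four) is an assumed, commonly used 2:4 criterion that may differ from the one in the paper.

```python
from typing import List


def squared_relu(row: List[float]) -> List[float]:
    """Squared ReLU applied elementwise: max(x, 0) ** 2."""
    return [max(x, 0.0) ** 2 for x in row]


def prune_2_4(row: List[float]) -> List[float]:
    """Zero all but the 2 largest-magnitude values in each group of 4.

    Assumption: magnitude-based selection; the paper's exact rule may differ.
    """
    if len(row) % 4 != 0:
        raise ValueError("row length must be a multiple of 4")
    out = [0.0] * len(row)
    for start in range(0, len(row), 4):
        group = row[start:start + 4]
        # sorted() is stable, so ties resolve to the earlier index.
        keep = sorted(range(4), key=lambda i: -abs(group[i]))[:2]
        for i in keep:
            out[start + i] = group[i]
    return out


def sparse_activation(row: List[float]) -> List[float]:
    """Squared ReLU first, then 2:4 pruning of the result."""
    return prune_2_4(squared_relu(row))


example = sparse_activation([-1.0, 2.0, 0.5, 3.0, 1.0, -2.0, 0.0, 0.5])
print(example)  # [0.0, 4.0, 0.0, 9.0, 1.0, 0.0, 0.0, 0.25]
```

On a real GPU the pruned tensor would additionally be packed into a compressed values-plus-metadata layout so that sparse matrix kernels can consume it; this sketch only shows which entries survive.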

Related Articles

Helping ChatGPT better recognize context in sensitive conversations

Dev.to

The Code You Shipped Yesterday Won't Scale Tomorrow, Here's Why

Dev.to

**📈 I just launched NeuroArchAI Platform – and it's completely FREE on GitHub right now.**

Dev.to

From One-on-One to One-to-Many: Scaling Your Coaching with AI

Dev.to

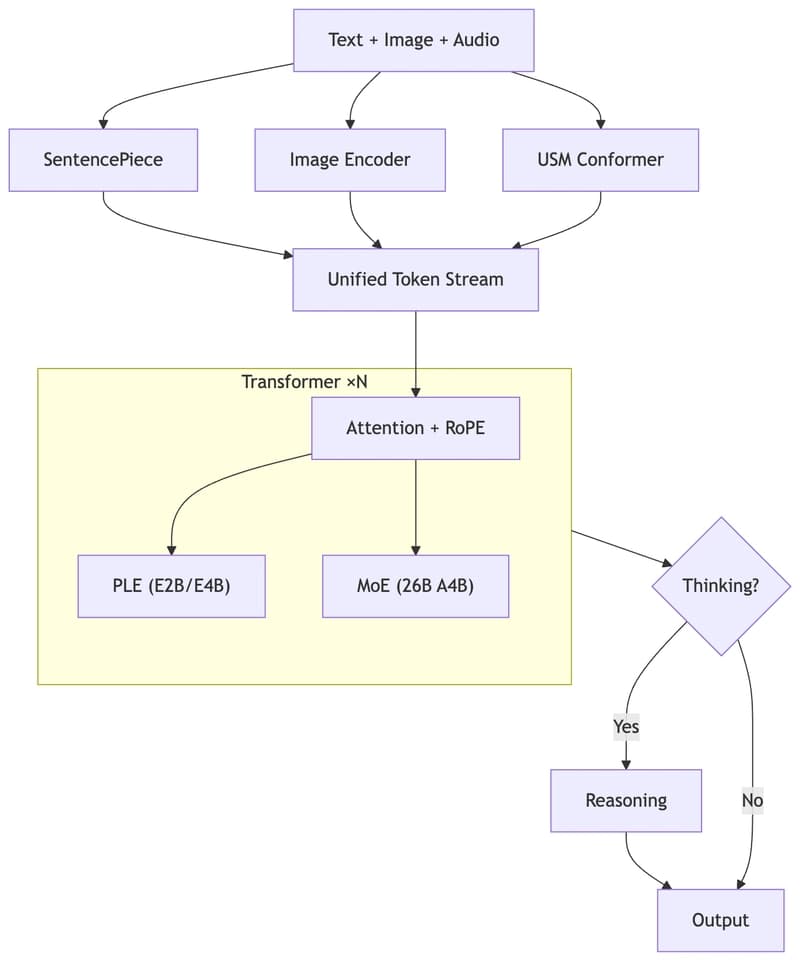

Run Gemma 4 on Your Laptop — A Hands-On Guide to Google's Latest Open Multimodal LLM

Dev.to