| TL;DR - I remembered FPGA PCIe boards being a big thing from my crypto days and wondered if an AMD Alveo V80 FPGA card could be used to approximate the performance of a Taalas HC1 (LLM-on-a-chip). I ran the idea past Gemini Pro for a feasibility / sanity check. It suggested what seemed to be a speculative-decoding type of setup on the FPGA and said I might get to 3,200 tk/s with a Q4 of Qwen3.5 4b, or maybe 1,400 tk/s with the 9b. Not Taalas HC1 speeds, but still pretty fast (potentially). Posting here to see if anyone has already tried anything like this. AMD V80 FPGAs cost around $9,500 USD, btw.

So I saw that a company called Taalas was burning the weights of Llama 3.1 8b onto a chip and getting a ridiculous 15,000 tk/s. I don’t know when they are going to actually release this hardware, but it got me thinking. Obviously when you burn the model weights to the chip, that’s it, you’re going to be stuck using those weights forever, but if it’s a good model, then it’s still pretty awesome!

Back in my crypto mining days, I had a Bitmain L3+ ASIC miner, which had the Scrypt algo essentially burnt onto a bunch of chips so it could mine Scrypt really fast. You could also mine Scrypt with a GPU of course, but it was super slow compared to an ASIC miner, for the same reason a Taalas chip would beat a GPU for serving a hard-coded model. People got tired of ASIC miners because they were only good at one algo, and when a more efficient one came out, the one you bought 6 months ago was now a useless paperweight.

Then came Field Programmable Gate Arrays (FPGAs), which were supposedly going to be the best of both worlds: faster than mining with a GPU, but reprogrammable, so you weren’t stuck with one algo burnt onto the chips like with an ASIC. Essentially, a reprogrammable ASIC. This was about the time when ETH went to proof-of-stake and all crypto mining kind of took a nose dive and died off.
But I was probably months away from buying a Xilinx Alveo U55C (16GB HBM) PCIe FPGA. I didn’t end up buying it, but kinda wish I had now, for the following reason.

When I heard about the Taalas LLM-on-a-chip getting an insane 15,000 t/s, I thought: why doesn’t someone just do the same thing with an FPGA PCIe card? Could you use an FPGA card like the Xilinx U55C and kind of do what Taalas did, but burn the weights to the FPGA HBM, so you get a similar speed boost as if the weights were burnt on a chip while keeping the reprogrammability of an FPGA?

I ran this idea by Gemini Pro to get a sanity check. The Alveo U55C was too limited in its memory, but the AMD Alveo V80 (I guess AMD bought out Xilinx) has some intriguing specs that Gemini seemed to think might actually have a small chance of being used in a way that could accelerate inference beyond current consumer GPU speeds (not on par with Taalas, but faster than a GPU).

Here is the direct response from Gemini:

Question: Thought experiment: how would you best use the Alveo V80 to accelerate inference to a level close to the Taalas? Do your best to come up with something new that would accelerate inference. Give me the overview of what you would do (not the code).

This is exactly the kind of hardware puzzle I love diving into. To get an Alveo V80 to chase the Taalas HC1, we have to stop treating the V80 like a GPU and start exploiting its true nature: spatial computation and programmable memory controllers. Since we can't eliminate the High-Bandwidth Memory (HBM) wall entirely like Taalas does, our thought experiment needs to focus on effectively making the HBM invisible.

Here is an architectural overview of how I would build a V80 inference engine to bridge that gap. We’ll call this concept the Dual-Tier Speculative Fabric with Dynamic Activation-Routed Fetching (DARF).

The V80 has roughly 673 MB of internal UltraRAM and Block RAM. We dedicate this entirely to a highly distilled, ultra-quantized "draft" model.

The Concept: We train a tiny (e.g., 500M parameter) version of our target LLM and quantize it down to 1-bit or 1.5-bit (ternary) weights. This fits completely inside the on-chip SRAM.

The Execution: Because all weights are on-chip, this draft model operates exactly like the Taalas HC1. It suffers zero HBM fetch latency and can spit out speculative tokens at blistering speeds using the Versal AI Engines.
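As a rough illustration (not part of Gemini's response), here is what the draft-then-verify loop behind this idea looks like in toy Python. It is a minimal sketch of greedy speculative decoding: `toy_draft` and `toy_target` are made-up stand-ins for the SRAM draft model and the HBM main model, and the sequential verify loop stands in for what real hardware would do in a single batched pass.

```python
from typing import Callable, List, Tuple

# A "model" here is just a function from a token context to the next token.
Model = Callable[[List[int]], int]


def speculative_step(draft: Model, target: Model,
                     context: List[int], k: int) -> List[int]:
    """Draft k tokens, then keep the longest prefix the target agrees with.

    Always returns at least one new token, and every returned token is
    exactly what the target alone would have produced.
    """
    # 1. The cheap draft model proposes k tokens autoregressively.
    proposed: List[int] = []
    for _ in range(k):
        proposed.append(draft(context + proposed))

    # 2. The target checks each proposed position in order.
    accepted: List[int] = []
    for tok in proposed:
        expected = target(context + accepted)
        if tok != expected:
            accepted.append(expected)  # fix the first wrong guess and stop
            return accepted
        accepted.append(tok)

    # 3. Every guess was right: the target adds one bonus token.
    accepted.append(target(context + accepted))
    return accepted


# Hypothetical toy models: the target counts upward, and the draft
# guesses wrong whenever the next number is a multiple of 4.
def toy_target(ctx: List[int]) -> int:
    return ctx[-1] + 1


def toy_draft(ctx: List[int]) -> int:
    nxt = ctx[-1] + 1
    return nxt if nxt % 4 else 0


def generate(n_tokens: int, k: int = 5) -> Tuple[List[int], int]:
    """Generate n_tokens and count how many target passes were needed."""
    ctx = [0]
    passes = 0
    while len(ctx) - 1 < n_tokens:
        ctx += speculative_step(toy_draft, toy_target, ctx, k)
        passes += 1
    return ctx[1:n_tokens + 1], passes


tokens, passes = generate(12)
print(tokens, passes)
```

The point of the toy: the output is identical to what the target would generate on its own, but the number of target passes drops, and how far it drops depends entirely on how often the draft guesses right.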

This is where we solve the HBM bottleneck for the main, highly accurate model (e.g., an 8B parameter model) stored in the 32GB of HBM. FPGAs excel at scattered, irregular memory access, something that absolutely chokes GPUs.

The Concept: LLMs have massive activation sparsity; during inference, many neurons in a layer simply output zero and don't contribute to the next layer.

The Execution: We program custom memory controllers in the V80's FPGA fabric. Before a layer's computation begins, a tiny, predictive routing algorithm looks at the incoming token and determines which specific weights are actually needed. Instead of blindly streaming the entire 8B model matrix from HBM (which limits throughput), the custom memory controller performs fine-grained "scatter-gather" reads, fetching only the 15% to 20% of the weights that matter. By fetching 5x less data, we effectively multiply our 820 GB/s HBM bandwidth to act like a 4 TB/s connection.
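To make the "only fetch the weights that matter" idea concrete, here is a small pure-Python sketch (again, an illustration, not something Gemini produced). It assumes the simplest possible case: the layer's input activations are already known, and any weight row whose input is exactly zero is skipped. The predictive router described above is not modeled, and `sparse_matvec` and `effective_bandwidth_gbps` are hypothetical names.

```python
from typing import List, Tuple


def sparse_matvec(weights: List[List[float]],
                  x: List[float]) -> Tuple[List[float], int]:
    """Compute y[j] = sum_i x[i] * weights[i][j], skipping inactive inputs.

    weights has one row per input neuron. A row is only "fetched" when its
    input activation is nonzero. Returns (y, rows_fetched).
    """
    n_out = len(weights[0])
    y = [0.0] * n_out
    rows_fetched = 0
    for i, xi in enumerate(x):
        if xi == 0.0:
            continue  # inactive neuron: its weight row is never read
        rows_fetched += 1
        row = weights[i]
        for j in range(n_out):
            y[j] += xi * row[j]
    return y, rows_fetched


def effective_bandwidth_gbps(raw_gbps: float, fraction_fetched: float) -> float:
    """Bandwidth the memory 'acts like' if only a fraction of weights is read."""
    return raw_gbps / fraction_fetched


# Five input neurons, only one of them active.
W = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0], [9.0, 10.0]]
x = [0.0, 2.0, 0.0, 0.0, 0.0]
y, fetched = sparse_matvec(W, x)
print(y, f"{fetched}/{len(W)} rows fetched")

# Gemini's figure: reading 20% of the weights at 820 GB/s.
print(effective_bandwidth_gbps(820, 0.20))
```

The result is exact only because the skipped inputs really are zero. With a predictor that guesses which neurons will be active, any miss changes the output, so accuracy depends on how good that predictor is.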

Taalas uses custom 3-bit and 6-bit math. We will take this a step further by tailoring the math to the distribution of the specific model's weights.

The Concept: Standard processors use linear formats (INT4, FP8). We use the V80's Look-Up Tables (LUTs) to create custom Logarithmic Number Systems (LNS) or uneven bit-widths (e.g., 3.2-bit precision).

The Execution: The weights in HBM are packed in this custom format. When DARF streams them onto the chip, they are fed directly into custom-wired DSP slices that are physically synthesized for this exact, bizarre math. This packs significantly more model density into every single HBM read cycle.
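For a feel of what a logarithmic format means in practice, here is a minimal sketch of one simple variant: each weight is stored as a sign bit plus a small exponent index, so every value is a power of two times a scale. This is an assumed, simplified scheme for illustration only. It is not the format Gemini describes (no uneven bit-widths), and `lns_encode` / `lns_decode` are hypothetical names.

```python
import math
from typing import Tuple

Code = Tuple[int, int]  # (sign bit, exponent index)


def lns_encode(w: float, scale: float, exp_bits: int = 3) -> Code:
    """Encode w as (sign, idx) so that w is roughly sign * scale * 2**(-idx).

    With exp_bits=3 the code needs 1 + 3 = 4 bits per weight.
    Assumption: zero has no exact code here and maps to the smallest magnitude.
    """
    max_idx = 2 ** exp_bits - 1
    if w == 0.0:
        return (0, max_idx)
    sign = 1 if w < 0 else 0
    idx = round(-math.log2(abs(w) / scale))
    idx = max(0, min(max_idx, idx))  # clamp into the representable range
    return (sign, idx)


def lns_decode(code: Code, scale: float) -> float:
    """Turn a (sign, idx) code back into a float."""
    sign, idx = code
    magnitude = scale * 2.0 ** (-idx)
    return -magnitude if sign else magnitude


for w in [0.25, -0.5, 0.3, -0.07]:
    code = lns_encode(w, scale=1.0)
    print(w, code, lns_decode(code, scale=1.0))
```

The levels are dense near zero and coarse for large values, which suits weight distributions that cluster around zero, and multiplying by a power of two is just a bit shift in hardware. The trade-off is visible in the example: 0.3 gets snapped to 0.25.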

GPUs process models temporally: load Layer 1, compute, store to memory; load Layer 2, compute, store to memory.

The Concept: We treat the V80 not as an ALU, but as an assembly line.

The Execution: We physically map the transformer architecture across the silicon. The HBM streams the custom-quantized weights continuously. Token data flows sequentially from the LUTs (doing layer normalization), into the AI Engines (doing the DARF-filtered matrix math), and out to the next physical sector of the chip. The token data never goes back to external memory until the final prediction is made.

The Result: We use the SRAM Draft Core to generate 5-10 tokens instantly with zero memory latency (the Taalas party trick). We then use the DARF-enabled main model streaming from HBM to verify those tokens in one shot, but because we are only fetching a fraction of the weights using custom non-linear precision, the verification step takes a fraction of the usual time.

You would still lose to Taalas on raw power efficiency (Joules per token), because powering HBM simply takes more energy than static SRAM. But in terms of Tokens Per Second, this architecture could push the V80 into the same neighborhood.

If you were building this, which specific open-source model architecture (like Llama, Mistral, or a Mixture-of-Experts) do you think would be the best candidate to map onto this kind of highly sparse, physically pipelined FPGA setup?

I responded with: "I was thinking about maybe a Qwen3.5 9b or 4b at Q4 (or whatever would fit). Is this possible and what do you think the tokens per second would be using the architecture you described?" and it followed up with:

It is absolutely possible, and those model sizes hit the sweet spot for the V80's memory capacity. Qwen models (whether we are talking about the current 2.5 generation or a hypothetical 3.5) have excellent architectural efficiency, making them great targets for this kind of hardware manipulation.
Here is how we would map a Qwen 4B or 9B at Q4 precision onto our hypothetical "DARF" architecture, and the theoretical math to calculate the tokens per second (tok/s).

At 4-bit (Q4) quantization, the memory footprints are highly manageable:

- Qwen 4B at Q4: Requires roughly 2 GB of memory.
- Qwen 9B at Q4: Requires roughly 4.5 GB of memory.

Both of these fit comfortably inside the V80's 32 GB of HBM2e. However, remember that our architecture uses a "Dual-Tier" system. Because the V80 only has ~673 MB of internal SRAM, the 4B and 9B models cannot act as the instantaneous Draft Core.

The Setup: We would put the Qwen 4B or 9B into the HBM as the "Main Validator Model." We would then need to distill a tiny, sub-1-billion parameter Qwen variant (quantized to 1 or 2 bits so it fits under 600 MB) to live permanently on the SRAM as the "Speculative Draft Core."
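The footprint numbers above are just parameters times bits per weight. A quick sketch to check them, assuming decimal gigabytes and weights only (no KV cache, activations, or quantization scales); the 32 GB and ~673 MB capacities are the figures quoted in the Gemini response, not independently verified specs.

```python
def footprint_gb(params: float, bits_per_weight: float) -> float:
    """Weight storage in decimal GB: params * bits / 8 bits-per-byte / 1e9."""
    return params * bits_per_weight / 8 / 1e9


HBM_GB = 32.0    # V80 HBM capacity as quoted above
SRAM_GB = 0.673  # on-chip SRAM figure as quoted above (unverified)

candidates = [
    ("Qwen 4B @ 4-bit (main, HBM)", 4e9, 4.0, HBM_GB),
    ("Qwen 9B @ 4-bit (main, HBM)", 9e9, 4.0, HBM_GB),
    ("1B draft @ 2-bit (SRAM)", 1e9, 2.0, SRAM_GB),
    ("0.5B draft @ 1.58-bit (SRAM)", 0.5e9, 1.58, SRAM_GB),
    ("4B @ 4-bit (SRAM)", 4e9, 4.0, SRAM_GB),
]

for name, params, bits, capacity in candidates:
    size = footprint_gb(params, bits)
    verdict = "fits" if size <= capacity else "does NOT fit"
    print(f"{name}: {size:.3f} GB, {verdict} in {capacity} GB")
```

Real Q4 formats store per-block scales alongside the 4-bit values, so actual files come out somewhat larger than the plain 4-bits-per-weight estimate.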

In traditional LLM inference (at a batch size of 1), the absolute speed limit is dictated by memory bandwidth. The V80 has a massive HBM bandwidth of 820 GB/s.

Step A: The Standard Baseline (No Custom Architecture)

If we ran the V80 normally, fetching the whole model for every token:

- Qwen 4B: 820 GB/s ÷ 2 GB = 410 tok/s
- Qwen 9B: 820 GB/s ÷ 4.5 GB = 182 tok/s

Step B: Applying the DARF Multiplier (Sparsity)

Our Dynamic Activation-Routed Fetching intercepts the tokens and only fetches the weights that are mathematically active. If we assume a conservative 75% sparsity (meaning we only need to read 25% of the model from HBM per token), our effective bandwidth multiplies by 4.

- Qwen 4B with DARF: 410 tok/s × 4 = 1,640 tok/s
- Qwen 9B with DARF: 182 tok/s × 4 = 728 tok/s

Step C: Applying the SRAM Draft Core (Speculative Decoding)

While the HBM is doing its sparse fetching, our ultra-tiny Draft Core on the SRAM is instantly generating 3 to 5 speculative tokens ahead of time with zero memory latency. The Main Model just verifies them in a single batch. A highly tuned speculative decoding setup can yield a 2x to 2.5x speedup. We will use a conservative 2x multiplier.

- Qwen 4B Final: 1,640 tok/s × 2 = 3,280 tok/s
- Qwen 9B Final: 728 tok/s × 2 = 1,456 tok/s

The Verdict

Using the Alveo V80 with this custom, highly sparse spatial pipeline, you could theoretically achieve ~3,200 tok/s for the 4B model and ~1,400 tok/s for the 9B model at Q4. While Taalas aims for five-figure token rates, getting 1,400 to 3,200 tok/s on a single piece of commercially available hardware is still face-meltingly fast: easily 10x to 15x faster than running the same models on a high-end consumer GPU.

If we were to actually attempt to synthesize this, the hardest bottleneck wouldn't be the AI math, but writing the custom memory controllers in Verilog/VHDL to handle those scattered reads.
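The three steps above collapse into one formula, which makes it easy to plug in your own assumptions. This is a back-of-the-envelope sketch using the same inputs as the Gemini response (820 GB/s, 25% of weights fetched, 2x speculative speedup); `tokens_per_second` is a hypothetical helper, and the result is a theoretical bandwidth-bound ceiling that ignores compute, KV-cache reads, and controller overhead.

```python
def tokens_per_second(bandwidth_gbps: float,
                      model_gb: float,
                      fraction_fetched: float = 1.0,
                      spec_speedup: float = 1.0) -> float:
    """Bandwidth-bound ceiling at batch size 1.

    Each token needs model_gb * fraction_fetched GB read from memory,
    and speculative decoding multiplies the result by spec_speedup.
    """
    gb_read_per_token = model_gb * fraction_fetched
    return bandwidth_gbps / gb_read_per_token * spec_speedup


HBM_GBPS = 820.0  # V80 HBM bandwidth as quoted above

for name, size_gb in [("Qwen 4B Q4", 2.0), ("Qwen 9B Q4", 4.5)]:
    baseline = tokens_per_second(HBM_GBPS, size_gb)
    sparse = tokens_per_second(HBM_GBPS, size_gb, fraction_fetched=0.25)
    final = tokens_per_second(HBM_GBPS, size_gb, 0.25, spec_speedup=2.0)
    print(f"{name}: baseline {baseline:.0f}, "
          f"sparse {sparse:.0f}, sparse+spec {final:.0f} tok/s")
```

Without the intermediate rounding, the 9B figure comes out at roughly 1,458 tok/s rather than 1,456. More importantly, both multipliers are assumptions: set `fraction_fetched=1.0` and `spec_speedup=1.0` and you are back to the 410 and 182 tok/s baselines.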
Of course, this could all just be a goading sycophantic hallucination by Gemini Pro, but I thought I would post it here to see if anyone had been looking into anything similar and had tried anything like this. Now I just need $9500 for an AMD Alveo V80 LOL |

Thoughts on using an AMD Alveo V80 FPGA PCI card as a poor man’s Taalas HC1 (LLM-burned-onto-a-chip).

Reddit r/LocalLLaMA / 4/27/2026


Key Points

- The post speculates about using an AMD Xilinx Alveo V80 FPGA PCIe card to approximate the performance of Taalas HC1’s “LLM burned onto a chip” approach, mainly by leveraging FPGA-style acceleration.

- The author runs the idea by Gemini Pro for a feasibility check, which suggests a speculative-decoding-like FPGA setup could achieve roughly 3,200 tk/s for a Q4 4B model (or around 1,400 tk/s for a 9B model), while noting this would be below Taalas HC1 speeds.

- The post highlights a key tradeoff: once model weights are burned/embedded in hardware, the system is effectively locked to those weights, but the performance gains could still be worthwhile if the model is strong.

- Drawing from prior crypto-mining experience, the author compares ASICs (fast but single-algorithm) versus FPGAs (reprogrammable), framing this as a potential “reprogrammable ASIC” concept for LLM inference.

- The author shares curiosity about whether anyone has already attempted similar FPGA-based weight-burning or accelerator designs for LLM serving, and mentions the V80 card’s high cost (around $9,500).

Related Articles

Can Geometric Deep Learning lead eliminate the need of "Brute Force" pre-training [D]

Reddit r/MachineLearning

I Spent Weeks Reverse-Engineering OpenClaw. Here's What Nobody Tells You.

Dev.to

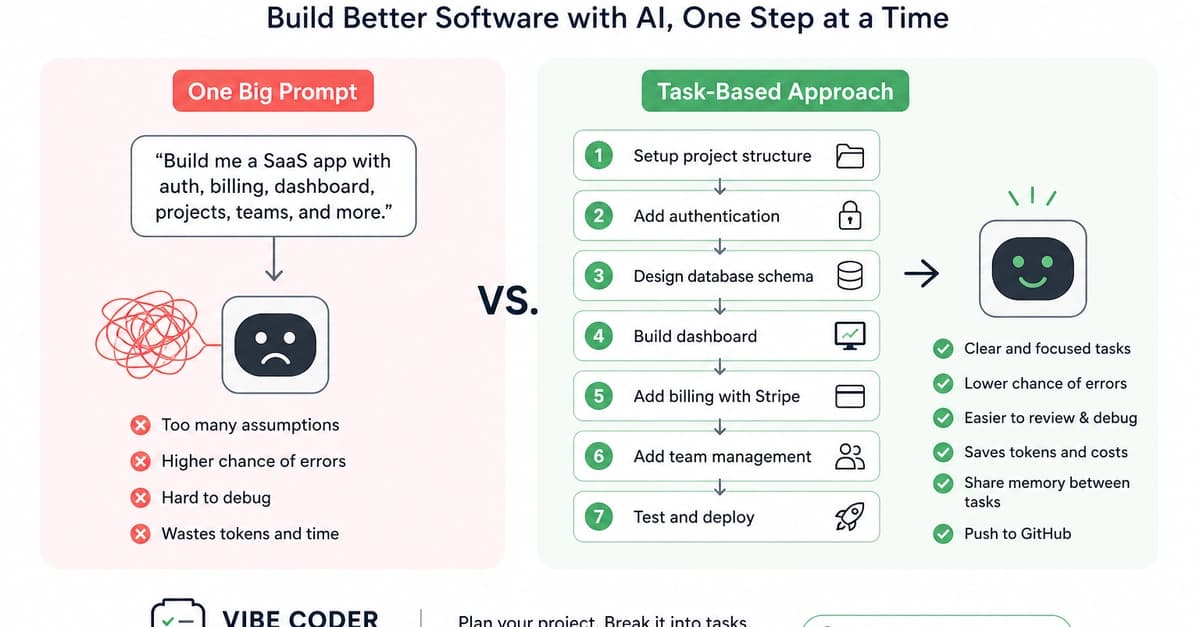

Why Task-Based Vibe Coding Is Better for Building Real Software Products

Dev.to

Programmers Becoming Product Managers

Dev.to

Probabilistic Graph Neural Inference for autonomous urban air mobility routing across multilingual stakeholder groups

Dev.to