Today I’m talking about a new research paper from Token AI. It introduces STAM (Stable Training with Adaptive Momentum), which could be one of the strongest optimizers, both in theory and in results.

For years, we’ve relied on well-known optimizers like Adam, AdamW, LAMB, and others, and they have been the go-to choices for training AI models. If you’re not familiar with what an optimizer is, in simple terms: it’s a core part of training any AI model, the algorithm responsible for updating the model’s weights during training to reduce the loss.

That said, these optimizers come with limitations that affect training. For example, Adam uses a fixed beta1 throughout training, so it can carry outdated momentum and keep pushing the model in the wrong direction. STAM addresses this by measuring the difference between the current gradient and the previous momentum (g - m). When the difference is large, it reduces beta1, which leads to more stable training during noisy phases.

Another issue appears when there’s a shift or noise in training: old momentum can become harmful. STAM handles this with an adaptive beta1 based on residual variance. A major issue in SGD with momentum is that if the direction becomes wrong, it keeps going because the momentum coefficient is fixed. STAM solves this by allowing the first moment (the momentum term) to self-correct.

Now let’s talk about STAMLite, the lighter version. It’s designed to replace AdamW as a default choice in many cases. The key difference is that beta1 is dynamic instead of fixed.
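To make the idea of a dynamic beta1 concrete, here is a minimal sketch in plain Python. It is not the update rule from the paper: the function names, the constants, and the exact formula are all assumptions, and a running average of the squared residual stands in for "residual variance". It only illustrates the behaviour described above, where a large gap between the gradient and the previous momentum (g - m) pulls beta1 down.

```python
def adaptive_beta1(grad, m, resid_var,
                   beta1_max=0.9, beta1_min=0.5,
                   var_decay=0.99, eps=1e-12):
    """Pick beta1 from how far the gradient is from the previous momentum.

    Hypothetical helper: names, bounds, and formula are illustrative
    assumptions, not taken from the STAM paper.
    """
    # Mean squared residual between the current gradient and previous momentum.
    resid_sq = sum((g - mi) ** 2 for g, mi in zip(grad, m)) / len(grad)
    # Disagreement in [0, 1): close to 1 when the residual is large
    # relative to its running average, close to 0 when it is small.
    disagreement = resid_sq / (resid_sq + resid_var + eps)
    # Large disagreement -> trust old momentum less -> smaller beta1.
    beta1 = beta1_max - (beta1_max - beta1_min) * disagreement
    # Running average of the squared residual (stand-in for residual variance).
    new_resid_var = var_decay * resid_var + (1.0 - var_decay) * resid_sq
    return beta1, new_resid_var


def sketch_step(params, grad, state, lr=1e-3):
    """One momentum-only update using the adaptive beta1 (assumed form)."""
    beta1, state["resid_var"] = adaptive_beta1(
        grad, state["m"], state["resid_var"]
    )
    # Standard exponential moving average, but with a per-step beta1.
    state["m"] = [beta1 * mi + (1.0 - beta1) * g
                  for mi, g in zip(state["m"], grad)]
    new_params = [p - lr * mi for p, mi in zip(params, state["m"])]
    return new_params, beta1
```

When the gradient agrees with the momentum, this sketch keeps beta1 at `beta1_max`, so it behaves like ordinary momentum. When the gradient suddenly disagrees, beta1 drops toward `beta1_min` and the stale momentum is forgotten faster.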

It also improves efficiency in terms of optimizer state memory.
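For a sense of scale, here is a rough back-of-the-envelope calculation of optimizer state memory. AdamW keeps two state buffers per parameter (the first and second moment estimates). How STAMLite lays out its state is not described here, so the single-buffer optimizer below is a purely hypothetical comparison point, and the 7B parameter count and fp32 state are assumptions as well.

```python
def optimizer_state_bytes(num_params, state_buffers, bytes_per_value=4):
    """Bytes of optimizer state: one value per parameter per buffer."""
    return num_params * state_buffers * bytes_per_value


num_params = 7_000_000_000  # assumed model size, for illustration only

# AdamW: two buffers per parameter (first and second moment), fp32.
adamw_bytes = optimizer_state_bytes(num_params, state_buffers=2)

# Hypothetical optimizer that keeps a single buffer per parameter.
single_buffer_bytes = optimizer_state_bytes(num_params, state_buffers=1)

print(f"AdamW state:         {adamw_bytes / 1e9:.0f} GB")
print(f"Single-buffer state: {single_buffer_bytes / 1e9:.0f} GB")
print(f"Saving:              {1 - single_buffer_bytes / adamw_bytes:.0%}")
```

Dropping one of two equally sized buffers is one way a figure of about half could arise, but that is only an illustration of the arithmetic, not a statement about how STAMLite works.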

In practice, STAMLite saves around 50% of the resources compared to AdamW and STAM, meaning significantly less GPU usage during training.

Looking at benchmarks, the results speak for themselves. In the hyperparameter sweep, STAMLite achieved:

In the long-horizon non-stationary MLP benchmark, STAM ranked first alongside NAdam with nearly identical results.

More benchmarks are available on the website and in the research paper.

This is an important step from Token AI, breaking the long-standing reliance on a limited set of optimizers that come with known issues. Even as an early release, it looks strong and promising. Personally, I’ve already switched to STAM and I’m currently training my first full LLM from scratch with it. I’ll be sharing the results soon.

Research paper:

Let me know what you think.

A new generation of AI models and one of the most powerful research papers out there.

Reddit r/LocalLLaMA / 5/8/2026

💬 Opinion / Developer Stack & Infrastructure / Models & Research

Key Points

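- Token AI's research paper "Stable Training with Adaptive Momentum (STAM)" proposes a new optimizer designed around the limitations of existing Adam-family optimization methods.

- STAM adaptively adjusts β1 (the momentum coefficient) using signals such as the difference between the current gradient and past momentum (g - m) and the variance of the residual, which stabilizes training even in noisy phases.

- The lightweight variant, "STAMLite", aims to replace AdamW as the default optimizer: it makes β1 dynamic while improving the memory efficiency of the optimizer state, substantially reducing GPU usage.

- On the practical side, STAMLite is described as needing fewer resources than AdamW and STAM while showing competitive accuracy and loss in benchmarks (hyperparameter sweeps and others).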

Related Articles

Helping ChatGPT better recognize context in sensitive conversations

Dev.to

The Code You Shipped Yesterday Won't Scale Tomorrow, Here's Why

Dev.to

**📈 I just launched NeuroArchAI Platform – and it's completely FREE on GitHub right now.**

Dev.to

From One-on-One to One-to-Many: Scaling Your Coaching with AI

Dev.to

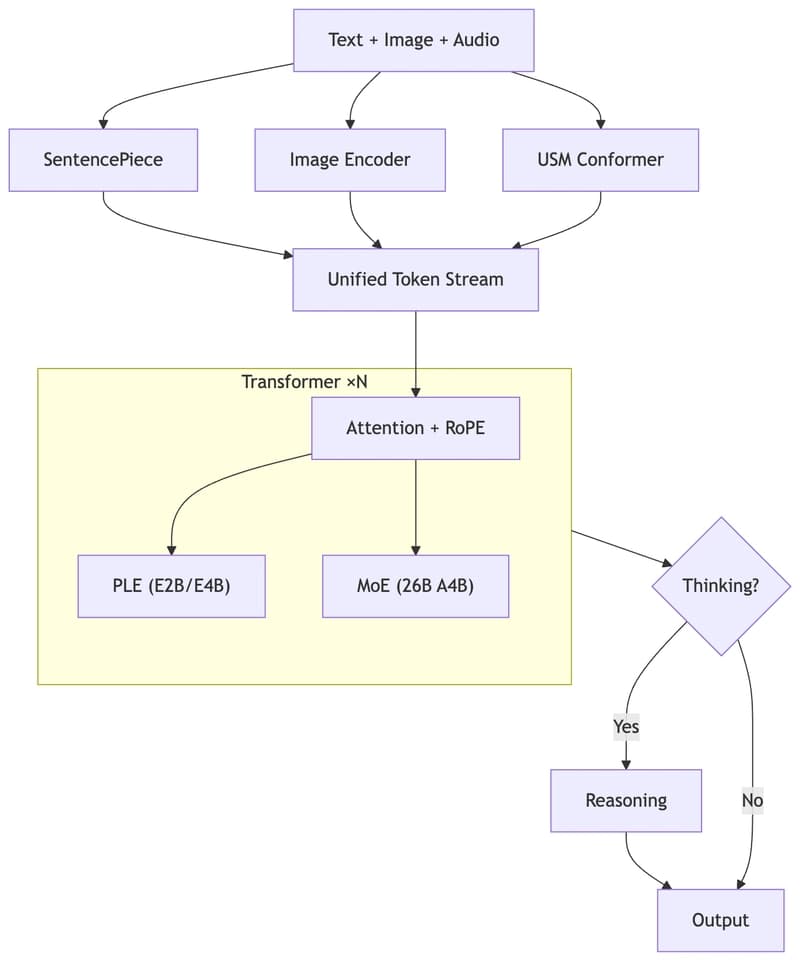

Run Gemma 4 on Your Laptop — A Hands-On Guide to Google's Latest Open Multimodal LLM

Dev.to