On-Device Vision Training, Deployment, and Inference on a Thumb-Sized Microcontroller

arXiv cs.LG / 4/28/2026


Key Points

- The paper proposes an end-to-end on-device vision ML pipeline that covers data acquisition, training of a small two-layer CNN with Adam optimization, and real-time inference, all running directly on a microcontroller-class device costing $15–40.

- The target hardware is the Seeed Studio ESP32-S3 XIAO ML Kit (8 MB PSRAM). On 64×64 three-class image classification, the paper reports about 9 minutes per training run and about 6.3 FPS at inference.

- The authors emphasize practical microcontroller engineering: correct batch-level gradient accumulation, precomputed resize lookup tables, PSRAM-aware memory management, and an interface that reconfigures the network by changing a single constant.

- For deployment convenience without SD cards, the system supports baked-in weight export using a dual-format scheme and an automated three-tier weight priority resolution at boot (SD binary > baked-in header > He initialization).

- All source code and reference datasets are released under the MIT License, aiming to make the full ML lifecycle transparent and reproducible in a compact C++ implementation (~1,750 lines, Arduino IDE build < 1 minute).
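To make the "precomputed resize lookup tables" point concrete, here is a minimal sketch, not the paper's code. It assumes nearest-neighbor sampling and a single-channel row-major frame; the names `ResizeLut`, `buildResizeLut`, and `resizeGray` are hypothetical. Only the 64×64 target size comes from the summary above.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// 64x64 is the input size reported in the summary; everything else here
// (names, nearest-neighbor sampling, grayscale layout) is an assumption.
constexpr std::size_t kDst = 64;

// One table per axis: destination index -> source index.
struct ResizeLut {
    std::array<std::uint16_t, kDst> x{};
    std::array<std::uint16_t, kDst> y{};
};

// Build the tables once (for example at boot) so the per-frame loop
// needs no division or floating-point math.
ResizeLut buildResizeLut(std::size_t srcW, std::size_t srcH) {
    ResizeLut lut;
    for (std::size_t i = 0; i < kDst; ++i) {
        lut.x[i] = static_cast<std::uint16_t>((i * srcW) / kDst);
        lut.y[i] = static_cast<std::uint16_t>((i * srcH) / kDst);
    }
    return lut;
}

// Resize a single-channel, row-major frame to kDst x kDst via the tables.
std::vector<std::uint8_t> resizeGray(const std::vector<std::uint8_t>& src,
                                     std::size_t srcW,
                                     const ResizeLut& lut) {
    std::vector<std::uint8_t> dst(kDst * kDst);
    for (std::size_t r = 0; r < kDst; ++r) {
        const std::size_t rowBase = static_cast<std::size_t>(lut.y[r]) * srcW;
        for (std::size_t c = 0; c < kDst; ++c) {
            dst[r * kDst + c] = src[rowBase + lut.x[c]];
        }
    }
    return dst;
}
```

The design choice is to pay for the index arithmetic once rather than on every pixel of every frame, which matters when the same resize runs in both the data-acquisition and inference loops.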
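The phrase "batch-level gradient accumulation" with Adam can be illustrated as follows. This is a sketch under assumptions rather than the authors' implementation: per-sample gradients are summed, then averaged, and exactly one Adam update is applied per batch. The `Adam` struct, its member names, and the default hyperparameters (the commonly used 0.9, 0.999, 1e-8) are hypothetical and not taken from the paper.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical optimizer state for a flat parameter vector.
struct Adam {
    float lr, beta1, beta2, eps;
    std::vector<float> m;        // first-moment estimate
    std::vector<float> v;        // second-moment estimate
    std::vector<float> gradSum;  // gradient accumulator for the current batch
    std::size_t samples = 0;     // samples accumulated so far in this batch
    long step = 0;               // number of Adam updates applied

    explicit Adam(std::size_t n, float lr_ = 1e-3f)
        : lr(lr_), beta1(0.9f), beta2(0.999f), eps(1e-8f),
          m(n, 0.0f), v(n, 0.0f), gradSum(n, 0.0f) {}

    // Called once per training sample: only accumulates, never updates weights.
    void accumulate(const std::vector<float>& grad) {
        for (std::size_t i = 0; i < gradSum.size(); ++i) gradSum[i] += grad[i];
        ++samples;
    }

    // Called once per batch: averages the accumulated gradient, applies one
    // bias-corrected Adam update, then clears the accumulator.
    void applyBatch(std::vector<float>& w) {
        if (samples == 0) return;
        ++step;
        const float c1 = 1.0f - std::pow(beta1, static_cast<float>(step));
        const float c2 = 1.0f - std::pow(beta2, static_cast<float>(step));
        for (std::size_t i = 0; i < w.size(); ++i) {
            const float g = gradSum[i] / static_cast<float>(samples);
            m[i] = beta1 * m[i] + (1.0f - beta1) * g;
            v[i] = beta2 * v[i] + (1.0f - beta2) * g * g;
            w[i] -= lr * (m[i] / c1) / (std::sqrt(v[i] / c2) + eps);
            gradSum[i] = 0.0f;
        }
        samples = 0;
    }
};
```

A training loop would call `accumulate` for each sample in a batch and `applyBatch` once at the end. Updating the moment estimates per sample instead would change the effective step count and bias correction, which is the kind of error that batch-level accumulation avoids.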
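The three-tier weight priority at boot (SD binary > baked-in header > He initialization) can be sketched as a small decision function. The enum, the function names, and the boolean inputs are hypothetical; how the real firmware validates the SD file or detects a baked-in header is not described in this summary. The He initializer below uses the normal variant (standard deviation sqrt(2 / fan-in)), which is an assumption about which variant the paper uses.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <random>
#include <vector>

// Hypothetical labels for the three tiers named in the summary.
enum class WeightSource { SdBinary, BakedInHeader, HeInit };

// Pick the highest-priority source that is available at boot.
WeightSource resolveWeightSource(bool sdFileValid, bool bakedInPresent) {
    if (sdFileValid) return WeightSource::SdBinary;
    if (bakedInPresent) return WeightSource::BakedInHeader;
    return WeightSource::HeInit;
}

// He (Kaiming) normal initialization: zero-mean Gaussian with
// standard deviation sqrt(2 / fanIn). The seed parameter is illustrative.
std::vector<float> heInit(std::size_t count, std::size_t fanIn, unsigned seed) {
    std::mt19937 rng(seed);
    std::normal_distribution<float> dist(
        0.0f, std::sqrt(2.0f / static_cast<float>(fanIn)));
    std::vector<float> w(count);
    for (float& x : w) x = dist(rng);
    return w;
}
```

With this ordering, a board with no SD card still boots into a trained model if weights were baked into the firmware, and falls back to a fresh random initialization only when neither source exists.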


Related Articles

I Build Systems, Flip Land, and Drop Trap Music — Meet Tyler Moncrieff aka Father Dust

Dev.to

Whatsapp AI booking system in one prompt in 5 minutes

Dev.to

v0.22.1

Ollama Releases

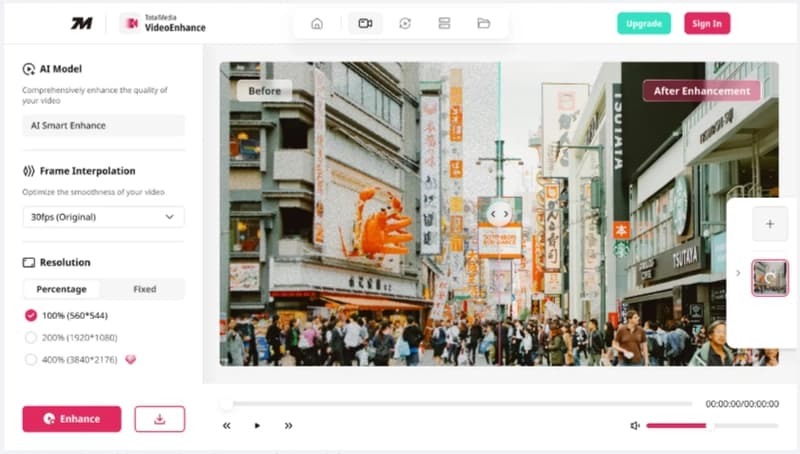

Launching TotalMedia: A Simpler Way to Fix and Convert Video Files

Dev.to

The best of Cloud Next '26: Gemini Enterprise Agent Platform. The perfect combination of Intelligence and Automation to generate VALUE.

Dev.to