MeshLAM: Feed-Forward One-Shot Animatable Textured Mesh Avatar Reconstruction

arXiv cs.CV / 4/28/2026

Key Points

- MeshLAM is a feed-forward framework that reconstructs a high-fidelity, animatable 3D textured head avatar from a single image in one forward pass.

- Unlike prior approaches, the method requires neither heavy test-time optimization nor multi-view input; it uses a dual shape/texture map architecture driven by a shared transformer backbone.

- MeshLAM introduces an iterative GRU-based decoder with progressive geometry deformation and texture refinement to prevent mesh collapse and maintain topological integrity during deformation.

- It also uses a reprojection-based texture guidance mechanism to anchor appearance learning to the input image, improving coherence of the reconstructed textures.

- According to the authors' experiments, MeshLAM outperforms existing state-of-the-art methods in reconstruction quality, animation capability, and computational efficiency.
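
To make the reprojection idea in the key points more concrete, here is a minimal sketch of what "anchoring appearance to the input image" can look like in general: project each mesh vertex into the image and read off the color found there. This is a generic illustration, not MeshLAM's implementation, which this summary does not detail. The pinhole camera model, the bilinear sampling, and all names (`project_point`, `bilinear_sample`, `reproject_vertex_colors`, `focal`, `cx`, `cy`) are assumptions made for the example, and occlusion handling is ignored.

```python
from math import floor


def project_point(point, focal, cx, cy):
    """Pinhole projection of a camera-space point (x, y, z) to pixel coordinates.

    Assumption: a simple pinhole camera with one focal length and principal
    point (cx, cy); the paper's camera model may differ.
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    return focal * x / z + cx, focal * y / z + cy


def _lerp(a, b, t):
    """Linear interpolation between two color tuples."""
    return tuple(ai + (bi - ai) * t for ai, bi in zip(a, b))


def bilinear_sample(image, u, v):
    """Sample `image` (rows of RGB tuples) at continuous pixel coords (u, v).

    Coordinates outside the image are clamped to the border.
    """
    height, width = len(image), len(image[0])
    u = min(max(u, 0.0), width - 1.0)
    v = min(max(v, 0.0), height - 1.0)
    x0, y0 = int(floor(u)), int(floor(v))
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    fx, fy = u - x0, v - y0
    top = _lerp(image[y0][x0], image[y0][x1], fx)
    bottom = _lerp(image[y1][x0], image[y1][x1], fx)
    return _lerp(top, bottom, fy)


def reproject_vertex_colors(vertices, image, focal, cx, cy):
    """Return one image-sampled color per vertex (visibility is not checked)."""
    return [
        bilinear_sample(image, *project_point(p, focal, cx, cy))
        for p in vertices
    ]


if __name__ == "__main__":
    # Tiny 2x2 RGB image and two camera-space vertices, for illustration only.
    image = [
        [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
        [(0.0, 1.0, 0.0), (1.0, 1.0, 0.0)],
    ]
    vertices = [(0.0, 0.0, 1.0), (-0.5, -0.5, 1.0)]
    print(reproject_vertex_colors(vertices, image, focal=1.0, cx=0.5, cy=0.5))
```

In a learning setup, colors sampled this way could serve as a guidance target for the visible part of a predicted texture; how MeshLAM actually uses its reprojected signal is described in the paper rather than here.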

Related Articles

I Build Systems, Flip Land, and Drop Trap Music — Meet Tyler Moncrieff aka Father Dust

Dev.to

Whatsapp AI booking system in one prompt in 5 minutes

Dev.to

v0.22.1

Ollama Releases

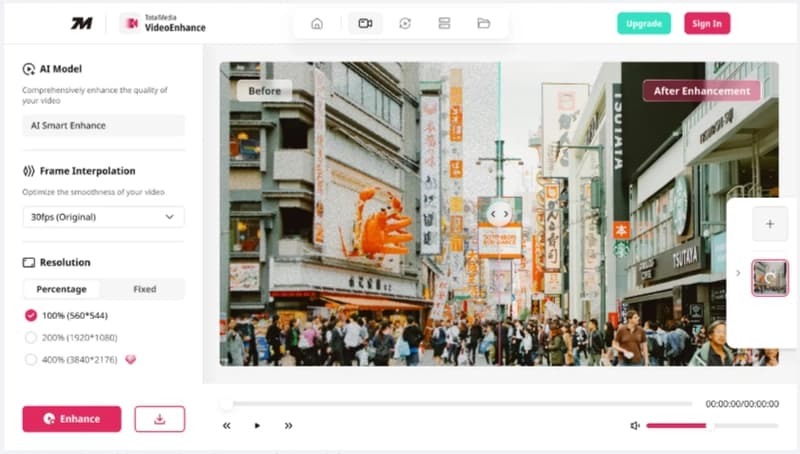

Launching TotalMedia: A Simpler Way to Fix and Convert Video Files

Dev.to

The best of Cloud Next '26: Gemini Enterprise Agent Platform. The perfect combination of Intelligence and Automation to generate VALUE.

Dev.to