As a technical writer leveraging AI for API documentation, you face a critical challenge: how can you confidently verify code snippets you didn’t write? Blind trust is not an option, but you don’t need a computer science degree to implement smart safeguards.

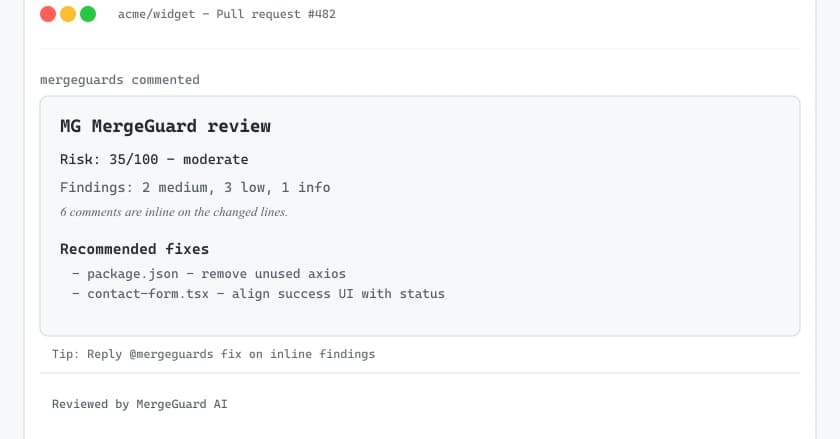

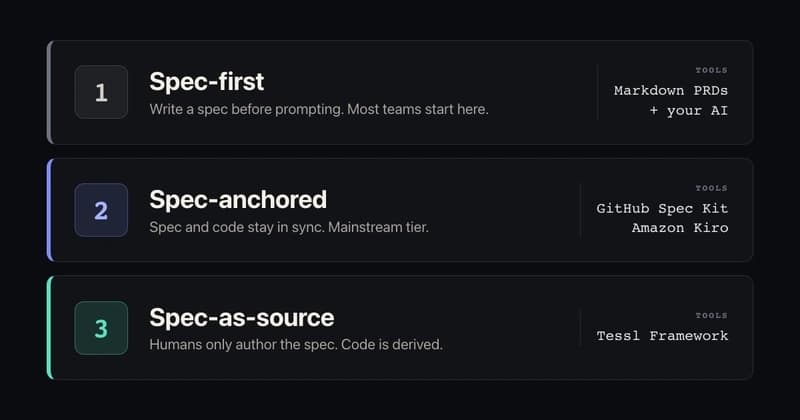

The Principle: Systematic, Automated Verification

The core principle for non-developers is to establish a simple, repeatable system of automated checks. Your role isn’t to debug complex logic but to gatekeep quality using accessible tools that catch common errors before snippets reach your documentation. This shifts your focus from understanding every line of code to managing a validation pipeline.
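To make the idea of a validation pipeline concrete, here is a minimal sketch in Python. It treats each check as a small function that receives the snippet text and returns an error message, or nothing if the snippet passes. The names `run_pipeline`, `python_syntax_check`, and `no_placeholder_check` are illustrative assumptions, not part of any existing tool, and the placeholder rule is a hypothetical house rule you would adapt.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# A check takes the snippet text and returns an error message, or None if it passes.
Check = Callable[[str], Optional[str]]


@dataclass
class Result:
    check_name: str
    passed: bool
    detail: str = ""


def python_syntax_check(snippet: str) -> Optional[str]:
    """Parse a Python snippet without running it and report the first syntax error."""
    try:
        compile(snippet, "<snippet>", "exec")
    except SyntaxError as err:
        return f"line {err.lineno}: {err.msg}"
    return None


def no_placeholder_check(snippet: str) -> Optional[str]:
    """Flag leftover placeholders (hypothetical house rule; adjust the markers)."""
    for marker in ("TODO", "YOUR_API_KEY_HERE"):
        if marker in snippet:
            return f"found placeholder {marker!r}"
    return None


def run_pipeline(snippet: str, checks: List[Check]) -> List[Result]:
    """Run every check in order and collect one result per check."""
    results = []
    for check in checks:
        error = check(snippet)
        results.append(Result(check.__name__, error is None, error or ""))
    return results


if __name__ == "__main__":
    snippet = "print('hello'"  # deliberately missing the closing parenthesis
    for r in run_pipeline(snippet, [python_syntax_check, no_placeholder_check]):
        print("PASS" if r.passed else "FAIL", r.check_name, r.detail)
```

The point of this shape is that you manage a list of checks rather than read the code yourself: adding a new safeguard means adding one function to the list.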

Tool in Action: ESLint for JavaScript

For JavaScript snippets, a primary tool is ESLint. It’s a static analysis tool: it reads your AI-generated code without running it and reports syntax errors, problematic patterns, and style inconsistencies. You don’t need to configure it deeply; a basic setup with its recommended rules, integrated into your workflow, can instantly flag obvious issues like missing brackets (reported as parsing errors) or undeclared variables, acting as your first line of defense.
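One way to fit ESLint into a writer's workflow is a small wrapper script that pipes a snippet to ESLint and prints one plain-English line per finding. The sketch below assumes ESLint is already installed and configured in the current project so that `npx eslint` runs; the function names and the file name `snippet.js` are illustrative. It relies on ESLint's `--stdin`, `--stdin-filename`, and `--format json` options.

```python
import json
import subprocess
from typing import List


def summarize_eslint_json(report_json: str) -> List[str]:
    """Turn ESLint's JSON formatter output into one readable line per finding."""
    findings = []
    for file_result in json.loads(report_json):
        for msg in file_result.get("messages", []):
            level = "error" if msg.get("severity") == 2 else "warning"
            # Parsing errors carry no rule id, so label them explicitly.
            rule = msg.get("ruleId") or "parse-error"
            findings.append(
                f"{level} line {msg.get('line')}: {msg.get('message')} ({rule})"
            )
    return findings


def lint_snippet(snippet: str) -> List[str]:
    """Pipe a snippet to ESLint on stdin. Assumes `npx eslint` works in this folder."""
    proc = subprocess.run(
        ["npx", "eslint", "--stdin", "--stdin-filename", "snippet.js",
         "--format", "json"],
        input=snippet,
        capture_output=True,
        text=True,
    )
    if not proc.stdout.strip():
        # ESLint itself failed to start (for example, no configuration found).
        raise RuntimeError(proc.stderr.strip() or "ESLint produced no output")
    return summarize_eslint_json(proc.stdout)
```

An empty list from `lint_snippet` means ESLint found nothing to report under your configured rules; it does not prove the snippet behaves correctly against the API.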

Scenario in Practice

Imagine you’ve generated a cURL snippet for a new API endpoint. Before publishing, you paste it into a command-line sandbox using test credentials. A failed run due to a malformed header flag is immediately apparent, allowing you to request a precise correction from your AI tool.
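Before the sandbox run, a quick pre-flight check can catch the most common header mistake without sending any request. The sketch below is a simplified assumption about what "malformed" means: it only verifies that each `-H` or `--header` value has the usual `Name: value` shape. curl also accepts a few less common forms (such as reading headers from a file), which this check would flag, so treat a hit as a prompt to look closer rather than a verdict. The function name and the `api.example.test` URL are placeholders.

```python
import shlex
from typing import List


def find_malformed_headers(curl_command: str) -> List[str]:
    """Return header values that lack the usual 'Name: value' shape."""
    # Join shell line continuations so multi-line commands parse as one line.
    flattened = curl_command.replace("\\\n", " ")
    try:
        tokens = shlex.split(flattened)
    except ValueError as err:
        # Raised by shlex for problems such as an unclosed quotation mark.
        return [f"could not parse command: {err}"]

    problems = []
    i = 0
    while i < len(tokens):
        token = tokens[i]
        if token in ("-H", "--header"):
            if i + 1 >= len(tokens):
                problems.append(f"{token} has no value")
                break
            value = tokens[i + 1]
            name, separator, _ = value.partition(":")
            # A header name must exist, be followed by a colon, and contain no spaces.
            if not separator or not name.strip() or " " in name.strip():
                problems.append(value)
            i += 2
            continue
        i += 1
    return problems


if __name__ == "__main__":
    command = 'curl "https://api.example.test/v1/items" -H "Authorization Bearer TEST_TOKEN"'
    for problem in find_malformed_headers(command):
        print("Check this header:", problem)
```

Anything the check reports can be pasted straight into your request for a correction, which keeps the feedback to the AI tool precise.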

Three High-Level Implementation Steps

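- Integrate Linting: For each primary language you document (e.g., JavaScript, Python), identify one linter, such as ESLint for JavaScript or Flake8 for Python. A formatter like Black is a useful companion, but it only rejects code it cannot parse, while a linter also flags problems such as undefined names. Use simple online versions or basic local scripts to scan every generated snippet automatically.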

- Leverage Sandbox Environments: Always execute snippets in isolated environments like API sandboxes or code playgrounds (e.g., Replit, CodeSandbox), using test credentials only. This tests runtime behavior without touching live systems.

- Compile When Possible: For compiled languages like Java, use the basic compilation command (javac) on a simplified test file containing the snippet. Any compiler error provides a clear, actionable message to feed back into your AI workflow for regeneration.
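The compile step can be scripted so you never have to build the test file by hand. The following sketch wraps a statement-level Java snippet in a class and `main` method, writes it to a temporary folder, and runs `javac` on it. The helper names and the class name `SnippetTest` are assumptions for illustration, and the wrapper is deliberately simple: snippets that need `import` lines or define their own classes would require a different template.

```python
import shutil
import subprocess
import tempfile
from pathlib import Path
from typing import Optional, Tuple


def wrap_snippet(snippet: str, class_name: str = "SnippetTest") -> str:
    """Place statement-level Java code inside a class and main method."""
    body = "\n".join("        " + line for line in snippet.splitlines())
    return (
        f"public class {class_name} {{\n"
        f"    public static void main(String[] args) throws Exception {{\n"
        f"{body}\n"
        f"    }}\n"
        f"}}\n"
    )


def compile_java(source: str, class_name: str = "SnippetTest") -> Optional[Tuple[bool, str]]:
    """Compile with javac. Returns (ok, compiler_output), or None if javac is missing."""
    if shutil.which("javac") is None:
        return None
    with tempfile.TemporaryDirectory() as workdir:
        # The file name must match the public class name for javac to accept it.
        path = Path(workdir) / f"{class_name}.java"
        path.write_text(source, encoding="utf-8")
        proc = subprocess.run(
            ["javac", "-d", workdir, str(path)],
            capture_output=True,
            text=True,
        )
        return proc.returncode == 0, proc.stderr


if __name__ == "__main__":
    outcome = compile_java(wrap_snippet("int total = ;"))  # deliberately broken
    if outcome is None:
        print("javac not found; install a JDK to run this check")
    else:
        ok, output = outcome
        print("Compiles cleanly" if ok else output)
```

When compilation fails, the compiler output printed here is the text to paste back into your AI tool when asking for a regenerated snippet.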

Key Takeaways

Your expertise as a technical writer lies in curating and validating content, not in writing code from scratch. By embedding a few automated tools (linters for static analysis, sandboxes for safe execution, and compilers for syntax checking), you build a robust validation layer. These checks cannot prove that a snippet is correct in every case, but they catch the most common failures before publication, so the AI-generated code you deliver has been run and vetted. That boosts both your credibility and the reliability of your documentation.