submitted by /u/TheBlueMatt

Mesa PR with 37-130% llama.cpp pp perf gain for Vulkan on Linux on Intel Xe2

Reddit r/LocalLLaMA / 4/27/2026

Key Points

- A Mesa merge request proposes Vulkan performance improvements for running llama.cpp workloads on Linux.

- Reported llama.cpp prompt-processing (pp) performance gains range from 37% to 130% on Intel Xe2 graphics in the tested setup.

- The change lives in Mesa, the open-source graphics driver stack, rather than in llama.cpp, so it targets the Vulkan compute path that llama.cpp's Vulkan backend already uses.

- The update is primarily relevant to developers and users running local LLM inference via llama.cpp on Linux with Intel Xe2-class hardware.

💡 Insights using this article

This article is featured in our daily AI news digest — key takeaways and action items at a glance.

Related Articles

I Spent Weeks Reverse-Engineering OpenClaw. Here's What Nobody Tells You.

Dev.to

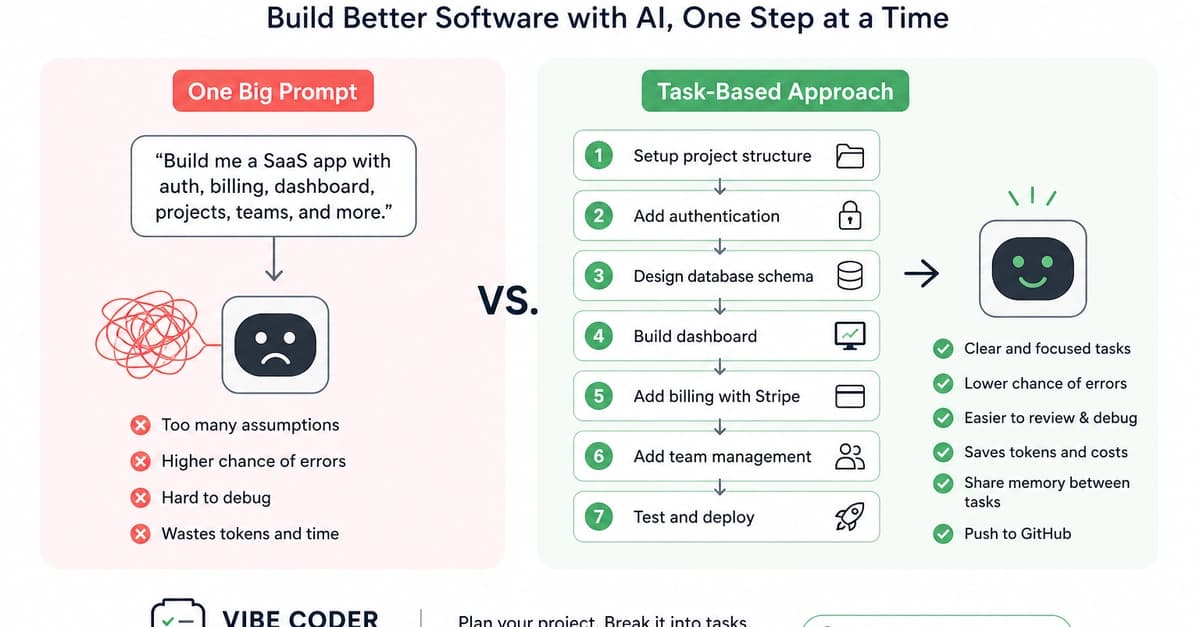

Why Task-Based Vibe Coding Is Better for Building Real Software Products

Dev.to

Programmers Becoming Product Managers

Dev.to

Your Data Doppelgänger is Already Here.

Dev.to

Using Claude Code With NVIDIA Build’s Free Models

Dev.to